I'm trying to load a table from Oracle to Postgres using SSIS, with ~200 million records. Oracle, Postgres, and SSIS are on separate servers.

Reading data from Oracle

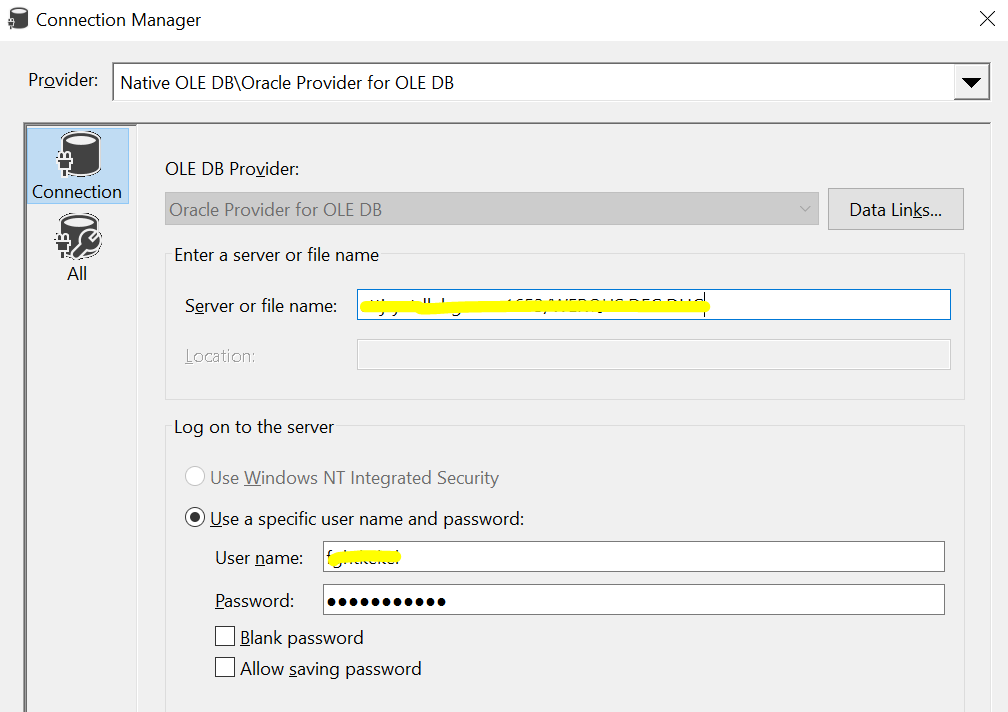

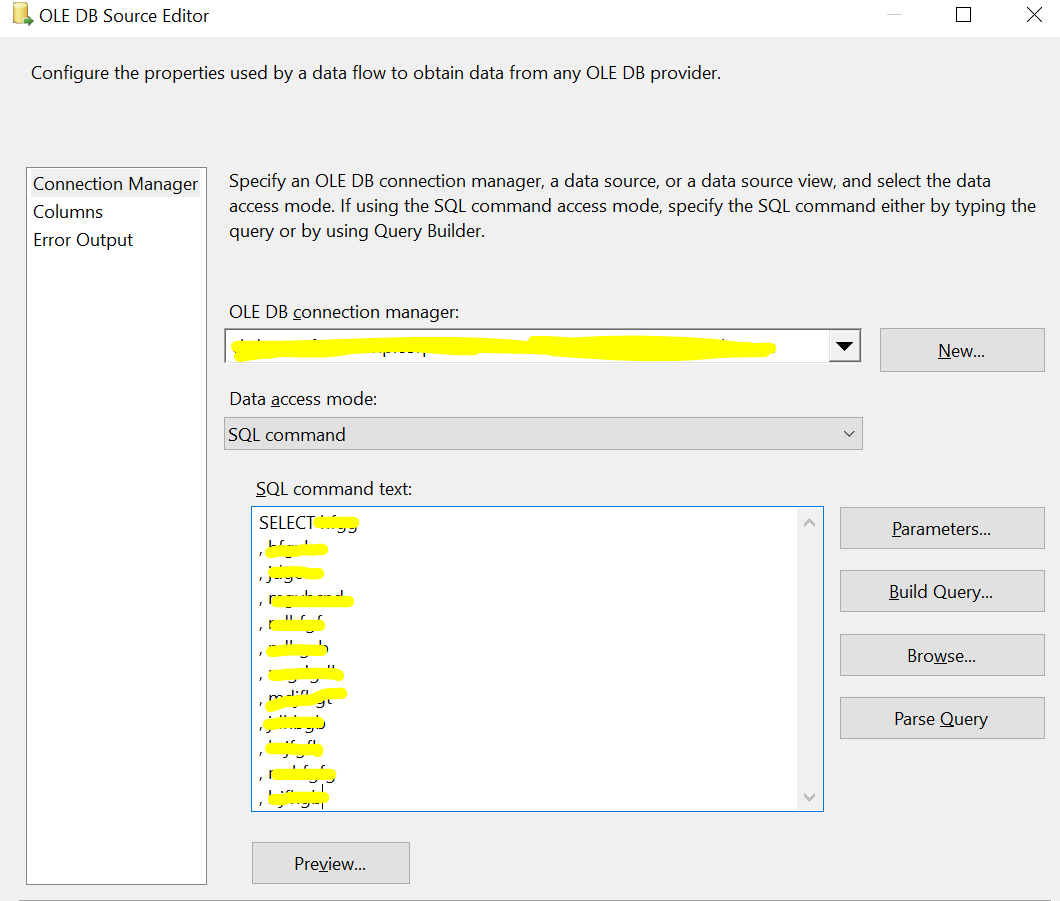

To read data from the Oracle database, I am using an OLE DB connection using "Oracle Provider for OLE DB". The OLE DB Source is configured to read data using an SQL Command.

In total there are 44 columns, mostly varchar, 11 numeric, and 3 timestamps.

Loading data into Postgres

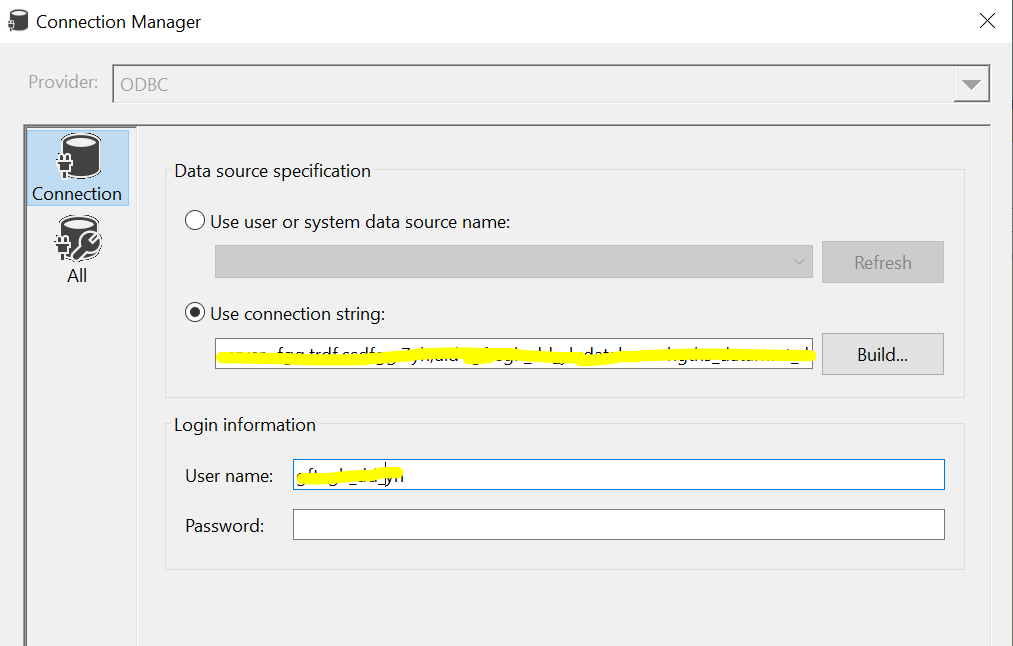

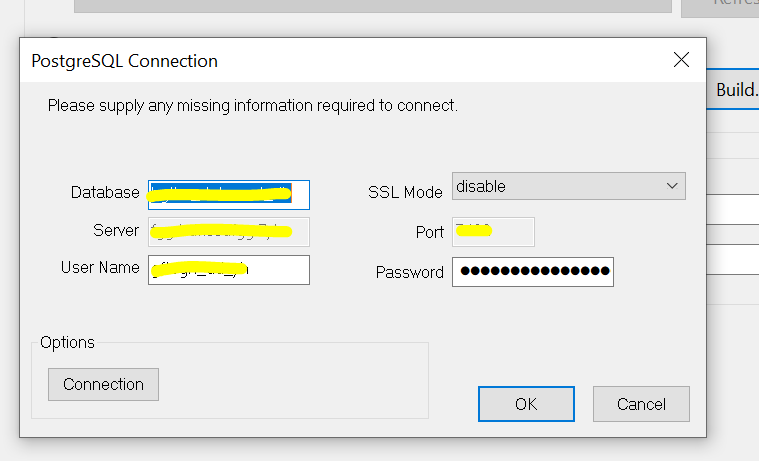

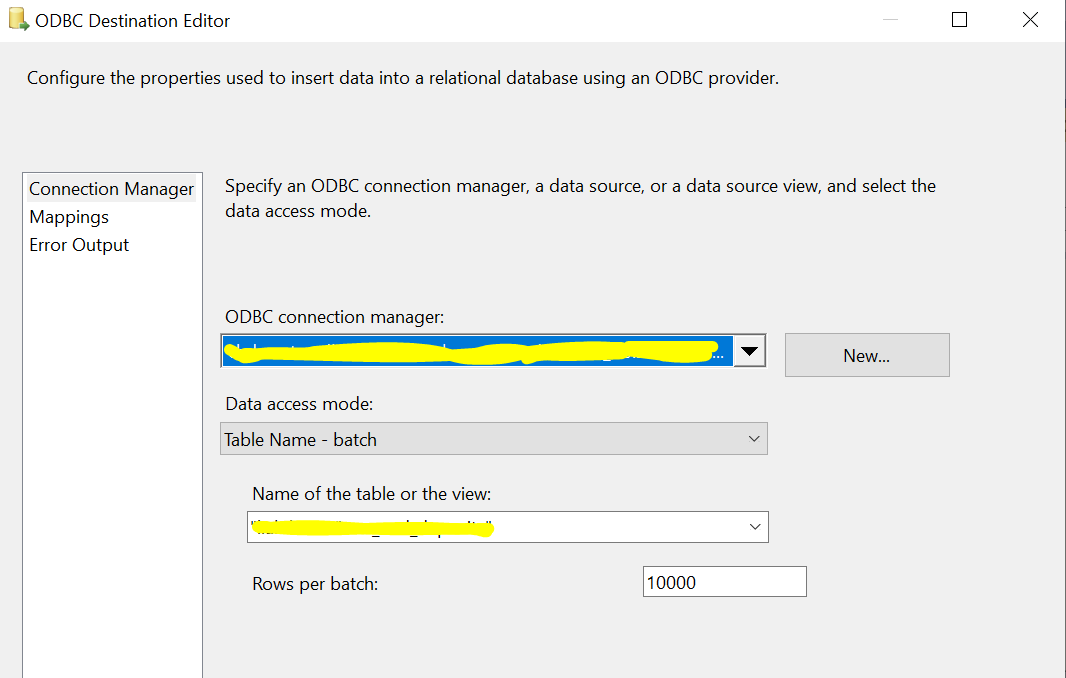

To lead data into Postgres, I am using an ODBC connection. The ODBC destination component is configured to load data in batch mode (not row-by-row insertion).

SSIS configuration

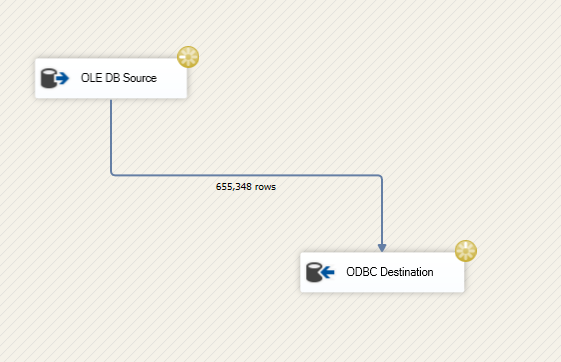

I created an SSIS package that only contains a straightforward Data Flow Task.

Issue

The load seems to take many hours to reach even a million count. The source query is giving results quickly while executing in SQL developer. But when I tried to export it threw limit exceeded error.

In SSIS, when I tried to preview the result of the Source SQL command it returned: The system cannot find message text for message number 0x80040e51 in the message file for OraOLEDB. (OraOLEDB)

Noting that the source(SQL command) and target table don't have any indexes.

Could you please suggest any methods to improve the load performance?

CodePudding user response:

I will try to give some tips to help you improve your package performance. You should start troubleshooting your package systematically to find the performance bottleneck.

Some provided links are related to SQL Server. No worries! The same rules are applied in all database management systems.

1. Available resources

First, you should ensure that you have sufficient resources to load the data from the source server into the destination server.

Ensure that the available memory on the source, ETL, and destination servers can handle the amount of data you are trying to load. Besides, make sure that your network connection bandwidth is not decreasing the data transfer performance.

Data flow task buffer size/limits

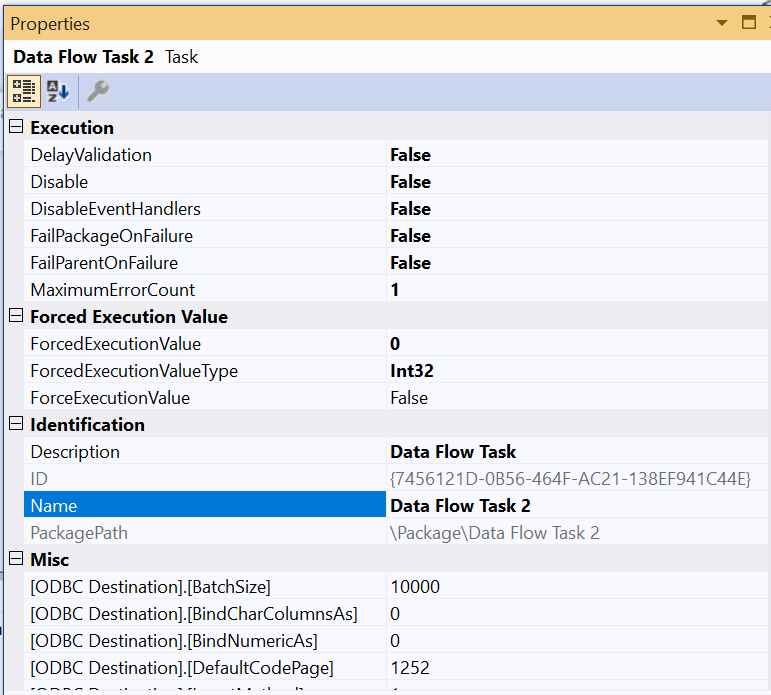

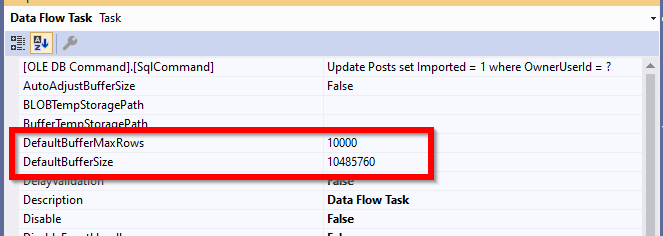

Using SSIS, data is loaded in memory while being transferred from source to destination. There are two properties in the data flow task that specifies how much data is transferred in memory buffers used by the SSIS pipelines.

Based on the following Integration Services performance tuning white paper:

DefaultMaxBufferRows – DefaultMaxBufferRows is a configurable setting of the SSIS Data Flow task that is automatically set at 10,000 records. SSIS multiplies the Estimated Row Size by the DefaultMaxBufferRows to get a rough sense of your dataset size per 10,000 records. You should not configure this setting without understanding how it relates to DefaultMaxBufferSize.

DefaultMaxBufferSize – DefaultMaxBufferSize is another configurable setting of the SSIS Data Flow task. The DefaultMaxBufferSize is automatically set to 10 MB by default. As you configure this setting, keep in mind that its upper bound is constrained by an internal SSIS parameter called MaxBufferSize which is set to 100 MB and can not be changed.

You should try to change those values and test your package performance each time you change them until the package performance increases.

4. Destination

Indexes/Triggers/Constraints

You should make sure that the destination table does not have any constraints or triggers since they significantly decrease the data load performance; each batch inserted should be validated or preprocessed before storing it.

- Are SQL Server database triggers evil?

- The benefits, costs, and documentation of database constraints

Besides, the more you have indexes the lower is the data load performance.

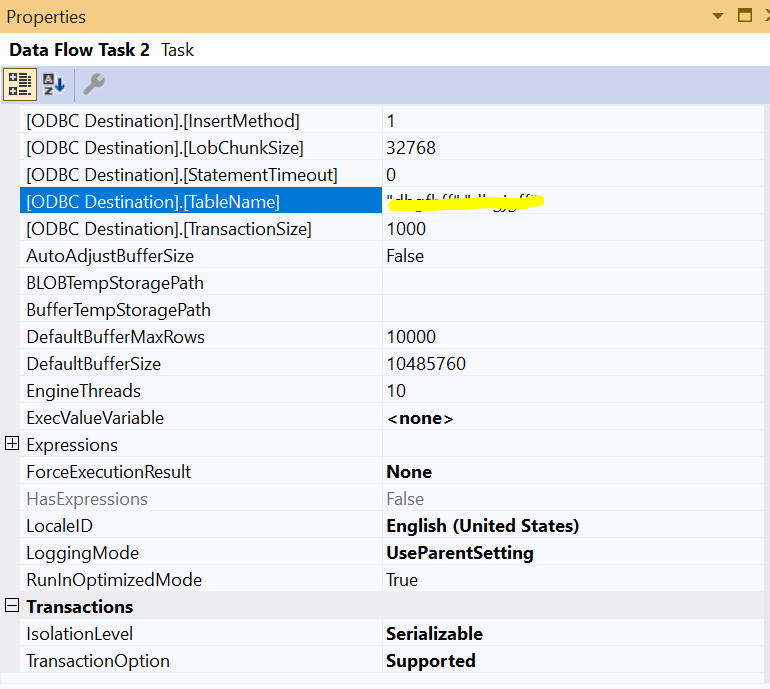

ODBC Destination custom properties

ODBC destination has several custom properties that can affect the data load performance such as

BatchSize(Rows per batch),TransactionSize(TransactionSize is only available in the advanced editor).