Here is my pandas dataframe:

df = pd.DataFrame({'Date': {0: '2016-10-11', 1: '2016-10-11', 2: '2016-10-11', 3: '2016-10-11', 4: '2016-10-11',5: '2016-10-12',6: '2016-10-12',7: '2016-10-12',8: '2016-10-12',9: '2016-10-12'}, 'Stock': {0: 'A', 1: 'B', 2: 'C', 3: 'D', 4: 'E', 5: 'F', 6: 'G', 7: 'H',8: 'I', 9:'J'}, 'Sector': {0: 0,1: 0, 2: 1, 3: 1, 4: 1, 5: 0, 6:0, 7:0, 8:1, 9:1}, 'Segment': {0: 0, 1: 1, 2: 1, 3: 1, 4: 1, 5: 1, 6:2,7:2,8:3,9:3}, 'Range': {0: 5, 1: 0, 2: 1, 3: 0, 4: 2, 5: 6, 6:0, 7:23, 8:5, 9:5}})

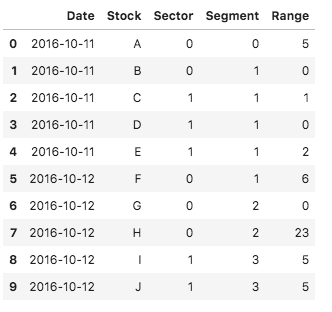

Here is how it looks:

I want to add the following columns:

- 'Date_Range_Avg': average of 'Range' grouped by Date

- 'Date_Sector_Range_Avg': average of 'Range' grouped by Date and Sector

- 'Date_Segment_Range_Avg': average of 'Range' grouped by Date and Segment

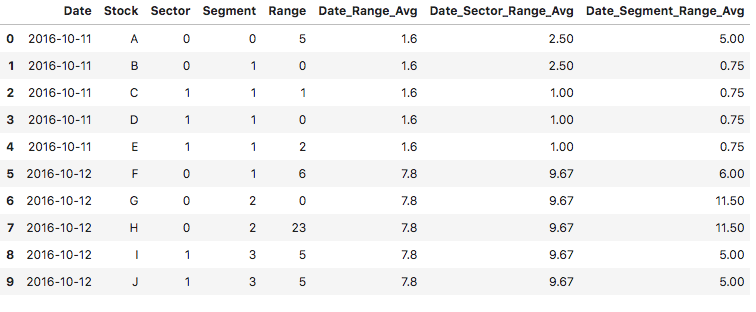

This would be the output:

res = pd.DataFrame({'Date': {0: '2016-10-11', 1: '2016-10-11', 2: '2016-10-11', 3: '2016-10-11', 4: '2016-10-11',5: '2016-10-12',6: '2016-10-12',7: '2016-10-12',8: '2016-10-12',9: '2016-10-12'}, 'Stock': {0: 'A', 1: 'B', 2: 'C', 3: 'D', 4: 'E', 5: 'F', 6: 'G', 7: 'H',8: 'I', 9:'J'}, 'Sector': {0: 0,1: 0, 2: 1, 3: 1, 4: 1, 5: 0, 6:0, 7:0, 8:1, 9:1}, 'Segment': {0: 0, 1: 1, 2: 1, 3: 1, 4: 1, 5: 1, 6:2,7:2,8:3,9:3}, 'Range': {0: 5, 1: 0, 2: 1, 3: 0, 4: 2, 5: 6, 6:0, 7:23, 8:5, 9:5}, 'Date_Range_Avg':{0: 1.6, 1: 1.6, 2: 1.6, 3: 1.6, 4: 1.6, 5: 7.8, 6: 7.8, 7: 7.8, 8:7.8, 9: 7.8}, 'Date_Sector_Range_Avg':{0: 2.5, 1: 2.5, 2: 1, 3: 1, 4: 1, 5: 9.67, 6: 9.67, 7: 9.67, 8: 9.67, 9: 9.67}, 'Date_Segment_Range_Avg':{0: 5, 1: 0.75, 2: 0.75, 3: 0.75, 4: 0.75, 5: 6, 6: 11.5, 7: 11.5, 8: 5, 9: 5}})

This is how it looks:

Note I have rounded some of the values - but this rounding is not essential for the question I have (please feel free to not round)

I'm aware that I can do each of these groupings separately but it strikes me as inefficient (my dataset contains millions of rows)

Essentially, I would like to first do a grouping by Date and then re-use it to do the two more fine-grained groupings by Date and Segment and by Date and Sector.

How to do this?

My initial hunch is to go like this:

day_groups = df.groupby("Date")

df['Date_Range_Avg'] = day_groups['Range'].transform('mean')

and then to re-use day_groups to do the 2 more fine-grained groupbys like this:

df['Date_Sector_Range_Avg'] = day_groups.groupby('Segment')[Range].transform('mean')

Which doesn't work as you get:

'AttributeError: 'DataFrameGroupBy' object has no attribute 'groupby''

CodePudding user response:

groupby runs really fast when the aggregate function is vectorized. If you are worried about performance, try it out first to see if it's the real bottleneck in your program.

You can create temporary data frames holding the result of each groupby, then successively merge them with df:

group_bys = {

"Date_Range_Avg": ["Date"],

"Date_Sector_Range_Avg": ["Date", "Sector"],

"Date_Segment_Range_Avg": ["Date", "Segment"]

}

tmp = [

df.groupby(columns)["Range"].mean().to_frame(key)

for key, columns in group_bys.items()

]

result = df

for t in tmp:

result = result.merge(t, left_on=t.index.names, right_index=True)

Result:

Date Stock Sector Segment Range Date_Range_Avg Date_Sector_Range_Avg Date_Segment_Range_Avg

0 2016-10-11 A 0 0 5 1.6 2.500000 5.00

1 2016-10-11 B 0 1 0 1.6 2.500000 0.75

2 2016-10-11 C 1 1 1 1.6 1.000000 0.75

3 2016-10-11 D 1 1 0 1.6 1.000000 0.75

4 2016-10-11 E 1 1 2 1.6 1.000000 0.75

5 2016-10-12 F 0 1 6 7.8 9.666667 6.00

6 2016-10-12 G 0 2 0 7.8 9.666667 11.50

7 2016-10-12 H 0 2 23 7.8 9.666667 11.50

8 2016-10-12 I 1 3 5 7.8 5.000000 5.00

9 2016-10-12 J 1 3 5 7.8 5.000000 5.00

Another option is to use transform, and avoid the multiple merges:

# reusing your code

group_bys = {

"Date_Range_Avg": ["Date"],

"Date_Sector_Range_Avg": ["Date", "Sector"],

"Date_Segment_Range_Avg": ["Date", "Segment"]

}

tmp = {key : df.groupby(columns)["Range"].transform('mean')

for key, columns in group_bys.items()

}

df.assign(**tmp)