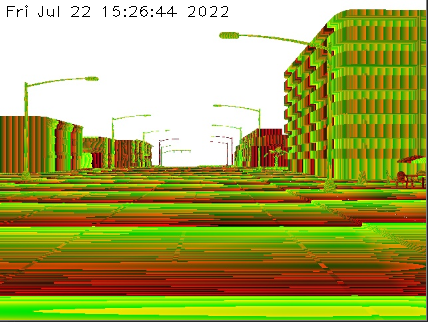

I am working with a simulation in Python equipped with a depth sensor. The visualization it's done in C . The sensor gives me the following image that I need to convert to gray.

For the conversion, I have the following formula:

    normalized = (R + G * 256 + B * 256 * 256) / (256 * 256 * 256 - 1)
    in_meters = 1000 * normalized

To convert the image to grayscale in C++, I've written the following code:

    cv::Mat ConvertRawToDepth(cv::Mat raw_image)
    {
        // raw_image.type() => CV_8UC3

        // Extend raw image to 2 bytes per pixel
        cv::Mat raw_extended = cv::Mat::Mat(raw_image.rows, raw_image.cols, CV_16UC3, raw_image.data);

        // Split into channels
        std::vector<cv::Mat> raw_ch(3);
        cv::split(raw_image, raw_ch); // B, G, R

        // Create and calculate 1 channel gray image of depth based on the formula
        cv::Mat depth_gray = cv::Mat::zeros(raw_ch[0].rows, raw_ch[0].cols, CV_32FC1);
        depth_gray = 1000.0 * (raw_ch[2] + raw_ch[1] * 256 + raw_ch[0] * 65536) / (16777215.0);

        // Create final BGR image
        cv::Mat depth_3d;
        cv::cvtColor(depth_gray, depth_3d, cv::COLOR_GRAY2BGR);

        return depth_3d;
    }

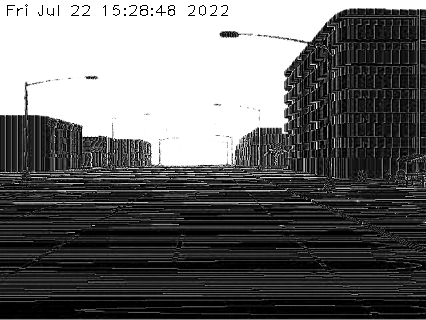

Achieving the next result:

If I do the conversion in Python, I can simply write:

    def convert_raw_to_depth(raw_image):
        raw_image = raw_image[:, :, :3]
        raw_image = raw_image.astype(np.float32)
        # Apply (R + G * 256 + B * 256 * 256) / (256 * 256 * 256 - 1).
        depth = np.dot(raw_image, [65536.0, 256.0, 1.0])
        depth /= 16777215.0  # (256.0 * 256.0 * 256.0 - 1.0)
        depth *= 1000
        return depth

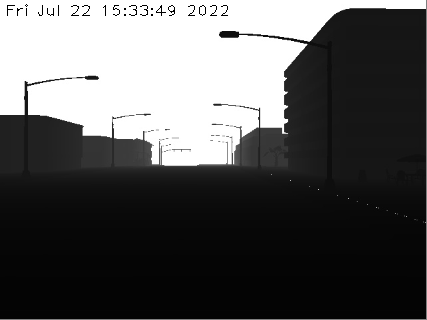

Achieving the next result:

The Python result is clearly better, but the formula and the image are the same. Why is there a difference, and how can I rewrite the C++ code to give results similar to Python's?

Answer:

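It looks like you are working with an np.float32 array in Python but a CV_8UC3 array in C++. Arithmetic on 8-bit OpenCV matrices saturates at 255, so terms such as raw_ch[1] * 256 cannot hold their true values.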
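As a rough illustration of what 8-bit storage does to one term of the formula, the sketch below clamps a value to 0..255 in plain C++. `SaturateToU8` and the two `GreenTerm...` helpers are hypothetical names, and the clamp is only a simplified stand-in for OpenCV's saturation: it ignores rounding and the way OpenCV may fuse several operations before storing the result.

```cpp
#include <algorithm>
#include <cstdint>

// Hypothetical stand-in for storing a value in an 8-bit (CV_8U) element:
// anything outside 0..255 is clamped to the nearest bound.
std::uint8_t SaturateToU8(double value)
{
    return static_cast<std::uint8_t>(std::min(255.0, std::max(0.0, value)));
}

// The G term of the formula, stored in 8 bits.
std::uint8_t GreenTermU8(std::uint8_t g)
{
    return SaturateToU8(g * 256.0);
}

// The same term kept as a float, which has room for the full product.
float GreenTermFloat(std::uint8_t g)
{
    return g * 256.0f;
}
```

In this model every nonzero G value gives the same 8-bit term (255), whereas the float version keeps, for example, 200 * 256 = 51200.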

Try converting to CV_32FC3 before the calculation:

    // Convert to float and split into channels
    cv::Mat raw_image_float;
    raw_image.convertTo(raw_image_float, CV_32FC3);
    std::vector<cv::Mat> raw_ch(3);
    cv::split(raw_image_float, raw_ch); // B, G, R