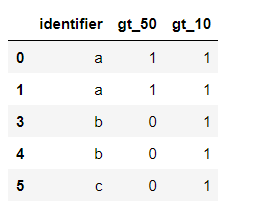

given the following df:

data = {'identifier': {0: 'a',

1: 'a',

3: 'b',

4: 'b',

5: 'c'},

'gt_50': {0: 1, 1: 1, 3: 0, 4: 0, 5: 0},

'gt_10': {0: 1, 1: 1, 3: 1, 4: 1, 5: 1}}

df = pd.DataFrame(data)

i want to find the nuniques of the column "identifier" for each column that starts with "gt_" and where the value is one.

Expected output:

- gt_50 1

- gt_10 3

I could make a for loop and filter the frame in each loop on one gt column and then count the uniques but I think it's not very clean. Is there a way to do this in a clean way?

CodePudding user response:

Use DataFrame.melt with filter _gt columns for unpivot, then get rows with 1 in DataFrame.query and last count unique values by DataFrameGroupBy.nunique:

out = (df.melt('identifier', value_vars=df.filter(regex='^gt_').columns)

.query('value == 1')

.groupby('variable')['identifier']

.nunique())

print (out)

variable

gt_10 3

gt_50 1

Name: identifier, dtype: int64

Or:

s = df.set_index('identifier').filter(regex='^gt_').stack()

out = s[s.eq(1)].reset_index().groupby('level_1')['identifier'].nunique()

print (df)

level_1

gt_10 3

gt_50 1

Name: identifier, dtype: int64