I am trying to run a Celery task in a Flask Docker container, and I get the error below when the task is executed:

web_1 | sock.connect(socket_address)

web_1 | OSError: [Errno 99] Cannot assign requested address

web_1 |

web_1 | During handling of the above exception, another exception occurred: **[shown below]**

web_1 | File "/opt/venv/lib/python3.8/site-packages/redis/connection.py", line 571, in connect

web_1 | raise ConnectionError(self._error_message(e))

web_1 | redis.exceptions.ConnectionError: Error 99 connecting to localhost:6379. Cannot assign requested address.

Without the Celery task, the application works fine.

docker-compose.yml

version: '3'

services:
  web:
    build: ./
    volumes:
      - ./app:/app
    ports:
      - "80:80"
    environment:
      - FLASK_APP=app/main.py
      - FLASK_DEBUG=1
      - 'RUN=flask run --host=0.0.0.0 --port=80'
    depends_on:
      - redis

  redis:
    container_name: redis
    image: redis:6.2.6
    ports:
      - "6379:6379"
    expose:
      - "6379"

  worker:
    build:
      context: ./
    hostname: worker
    command: "cd /app/routes && celery -A celery_tasks.celery worker --loglevel=info"
    volumes:
      - ./app:/app
    links:
      - redis
    depends_on:
      - redis

main.py

from flask import Flask
from instance import config, exts
from decouple import config as con

def create_app(config_class=config.Config):
    app = Flask(__name__)
    app.config.from_object(config.Config)
    app.secret_key = con('flask_secret_key')
    exts.mail.init_app(app)

    from routes.test_route import test_api
    app.register_blueprint(test_api)

    return app

app = create_app()

if __name__ == "__main__":
    app.run(host="0.0.0.0", debug=True, port=80)

I am using Flask blueprints to split the API routes.

test_route.py

from flask import Flask, render_template, Blueprint
from instance.exts import celery

test_api = Blueprint('test_api', __name__)

@test_api.route('/test/<string:name>')
def testfnn(name):
    task = celery.send_task('CeleryTask.reverse', args=[name])
    return task.id

The Celery tasks are also written in a separate file.

celery_tasks.py

from celery import Celery
from celery.utils.log import get_task_logger
from decouple import config
import time

celery = Celery('tasks',
                broker=config('CELERY_BROKER_URL'),
                backend=config('CELERY_RESULT_BACKEND'))

class CeleryTask:

    @celery.task(name='CeleryTask.reverse')
    def reverse(string):
        time.sleep(25)
        return string[::-1]

.env

CELERY_BROKER_URL = 'redis://localhost:6379/0'

CELERY_RESULT_BACKEND = 'redis://localhost:6379/0'

Dockerfile

FROM tiangolo/uwsgi-nginx:python3.8

RUN apt-get update

WORKDIR /app

ENV PYTHONUNBUFFERED 1

ENV VIRTUAL_ENV=/opt/venv

RUN python3 -m venv $VIRTUAL_ENV

ENV PATH="$VIRTUAL_ENV/bin:$PATH"

RUN python -m pip install --upgrade pip

COPY ./requirements.txt /app/requirements.txt

RUN pip install --no-cache-dir --upgrade -r /app/requirements.txt

COPY ./app /app

CMD ["python", "app/main.py"]

requirements.txt

Flask==2.0.3

celery==5.2.3

python-decouple==3.5

Flask-Mail==0.9.1

redis==4.0.2

SQLAlchemy==1.4.32

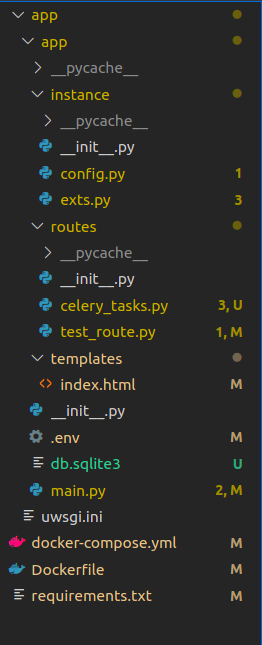

Folder Structure

Thanks in advance.

Answer:

At the end of your docker-compose.yml, you can add:

networks:
  your_net_name:
    name: your_net_name

And under each service (container):

networks:
  - your_net_name

These two steps put all the containers on the same network. Docker Compose creates a default network anyway, but I've had problems with its automatically generated name, so I think declaring the network explicitly gives you more control.
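To check that the containers really do share a network, one option is to try resolving the Redis name from inside the web container. This is only a rough sketch: the helper name can_resolve is made up for illustration, and it only tests name resolution, not whether Redis is actually accepting connections.

```python
import socket

def can_resolve(hostname, port=6379):
    """Return True if hostname resolves to at least one address.

    Hypothetical helper: it checks DNS resolution only and does not
    open a connection to Redis.
    """
    try:
        return len(socket.getaddrinfo(hostname, port)) > 0
    except socket.gaierror:
        # The name could not be resolved from this container.
        return False
```

Run from a Python shell inside the web container, can_resolve("redis") should come back True if the web and redis services are on the same Compose network.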

Finally, I'd also change your env variables to use the container's address instead of localhost:

CELERY_BROKER_URL=redis://redis_addr/0

CELERY_RESULT_BACKEND=redis://redis_addr/0

So you'd also add this setting to your redis service:

hostname: redis_addr

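This way the env var resolves to whatever address Docker has assigned to the Redis container. Inside the web container, localhost refers to the web container itself, which is why the connection to localhost:6379 fails.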
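If you want to catch this kind of misconfiguration early, a small check on the broker URL might help. The sketch below uses only the standard library; the function names broker_endpoint and points_at_loopback are hypothetical, and 6379 is assumed as the default Redis port when the URL omits one.

```python
from urllib.parse import urlparse

# Names that always mean "this container", never the Redis container.
LOOPBACK_HOSTS = {"localhost", "127.0.0.1", "::1"}

def broker_endpoint(url, default_port=6379):
    """Split a redis:// URL into (host, port), using default_port if none is given."""
    parsed = urlparse(url)
    return parsed.hostname, parsed.port or default_port

def points_at_loopback(url):
    """Return True if the broker URL targets the local container itself."""
    host, _ = broker_endpoint(url)
    return host in LOOPBACK_HOSTS
```

For example, points_at_loopback applied to the original value 'redis://localhost:6379/0' returns True, while the same call on 'redis://redis_addr/0' returns False. You could call it on config('CELERY_BROKER_URL') in celery_tasks.py and log a warning before creating the Celery app.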