I am working on an ELK stack setup, and I want to import data from a CSV file on my PC into Elasticsearch via Logstash. Elasticsearch and Kibana are working properly.

Here is my logstash.conf file:

input {
  file {
    path => "C:/Users/aron/Desktop/es/archive/weapons.csv"
    start_position => "beginning"
    sincedb_path => "NUL"
  }
}

filter {
  csv {
    separator => ","
    columns => ["name", "type", "country"]
  }
}

output {
  elasticsearch {
    hosts => ["http://localhost:9200/"]
    index => "weapons"
    document_type => "ww2_weapon"
  }
  stdout {}
}

A sample row from my .csv file looks like this:

| Name | Type | Country |
|---|---|---|
| 10.5 cm Kanone 17 | Field Gun | Germany |

German characters are all showing up.

I am running Logstash via: logstash.bat -f path/to/logstash.conf

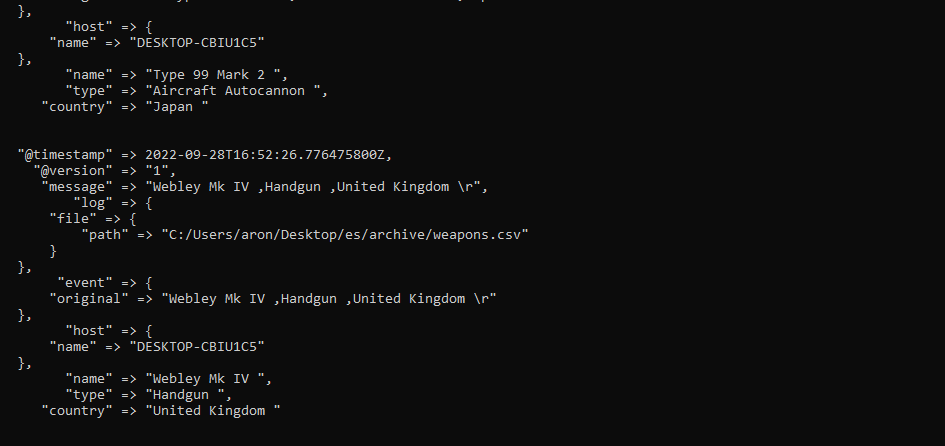

It starts working but it freezes and becomes unresponsive along the way, here is a screenshot of stdout

In Kibana, the index was created and 2 documents were imported, but the data is all messed up. What am I doing wrong?

CodePudding user response:

If your task is only to import that one CSV, you would be better off using the file upload in Kibana.

Should be available under the following link (for Kibana > v8):

<your Kibana domain>/app/home#/tutorial_directory/fileDataViz

Logstash is used if you want to do this job on a regular basis with new files coming in over time.
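For that recurring case, a file input along the following lines might be a starting point. This is only a sketch: the glob reuses the directory from the question, and the `sincedb_path` location is a hypothetical file chosen for illustration. Unlike `"NUL"`, a real sincedb file lets Logstash remember how far it has read, so files that were already imported are not read again after a restart.

```
input {
  file {
    # Glob pattern: picks up every CSV that appears in the directory (assumed path)
    path => "C:/Users/aron/Desktop/es/archive/*.csv"
    # Read newly discovered files from their first line
    start_position => "beginning"
    # Hypothetical location; persists read positions between runs
    sincedb_path => "C:/Users/aron/Desktop/es/logstash.sincedb"
  }
}
```

The `filter` and `output` sections would stay the same as for a single file.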

CodePudding user response:

You can try the configuration below. It runs fine on my machine.

input {
  file {
    path => "path/filename.csv"
    start_position => "beginning"
    # "NUL" is the Windows null device; on Linux/macOS use "/dev/null"
    sincedb_path => "NUL"
  }
}

filter {
  csv {
    separator => ","
    columns => ["field1","field2",...]
  }
}

output {
  # Print each parsed event so you can check the fields
  stdout { codec => rubydebug }
  elasticsearch {
    hosts => "https://localhost:9200"
    user => "username"      # if any
    password => "password"  # if any
    index => "indexname"
    document_type => "doc_type"
  }
}
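Adapted to the file from the question, the same pipeline could look roughly like the sketch below. It rests on a few assumptions that are not confirmed in the question: that the first line of weapons.csv is the header `Name,Type,Country`, that the file is comma-separated, and that Elasticsearch is reachable without authentication at the URL the question uses. As far as I understand the csv filter, `skip_header` only drops a row whose values match the `columns` list exactly, which is why the column names here copy the header's capitalization. `document_type` is left out because mapping types were removed in recent Elasticsearch versions.

```
input {
  file {
    path => "C:/Users/aron/Desktop/es/archive/weapons.csv"
    start_position => "beginning"
    # Windows null device: do not remember the read position between runs
    sincedb_path => "NUL"
  }
}

filter {
  csv {
    separator => ","
    # Assumed to match the header row exactly so skip_header can recognize it
    columns => ["Name", "Type", "Country"]
    skip_header => true
  }
}

output {
  # Print each parsed event so the fields can be checked before looking in Kibana
  stdout { codec => rubydebug }
  elasticsearch {
    hosts => ["http://localhost:9200"]
    index => "weapons"
  }
}
```

If the `rubydebug` output shows the whole row in a single `message` field with no `Name`, `Type`, or `Country` fields, the separator or the file encoding is probably not what the filter expects.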