Here are toy NumPy arrays:

nrow = 10

ar_label = np.arange(nrow**2).reshape(nrow, nrow)

ar_label[1:4, 1:4] = 100

ar_label[6:9, 2:5] = 200

ar_label[2:5, 6:9] = 300

ar_label = np.where(ar_label<100, np.nan, ar_label)

ar_label

array([[ nan, nan, nan, nan, nan, nan, nan, nan, nan, nan],

[ nan, 100., 100., 100., nan, nan, nan, nan, nan, nan],

[ nan, 100., 100., 100., nan, nan, 300., 300., 300., nan],

[ nan, 100., 100., 100., nan, nan, 300., 300., 300., nan],

[ nan, nan, nan, nan, nan, nan, 300., 300., 300., nan],

[ nan, nan, nan, nan, nan, nan, nan, nan, nan, nan],

[ nan, nan, 200., 200., 200., nan, nan, nan, nan, nan],

[ nan, nan, 200., 200., 200., nan, nan, nan, nan, nan],

[ nan, nan, 200., 200., 200., nan, nan, nan, nan, nan],

[ nan, nan, nan, nan, nan, nan, nan, nan, nan, nan]])

np.random.seed(11)

ar_rand = np.random.randint(0, nrow*3, size=nrow**2).reshape(nrow, nrow)

ar_rand = np.where(ar_rand==0, ar_rand, np.nan)

ar_rand

array([[nan, nan, nan, nan, nan, nan, nan, nan, nan, nan],

[nan, nan, nan, nan, nan, 0., nan, nan, nan, nan],

[nan, nan, nan, nan, nan, nan, nan, nan, nan, nan],

[nan, nan, nan, nan, nan, nan, nan, nan, 0., nan],

[nan, 0., nan, nan, nan, nan, nan, nan, nan, nan],

[nan, nan, nan, nan, nan, nan, 0., nan, nan, nan],

[nan, nan, nan, nan, nan, nan, nan, nan, nan, nan],

[nan, nan, nan, nan, nan, nan, 0., nan, nan, nan],

[nan, nan, nan, nan, nan, nan, nan, nan, nan, nan],

[nan, nan, 0., nan, nan, nan, nan, nan, nan, nan]])

Now, I want to replace zeros in ar_rand with the nearest (i.e., Euclidean distance using the two axes) non-nan value of the corresponding element in ar_label.

For example, the very left zero in ar_rand will be replaced with 100, the very bottom one will be replaced with 200, and so on.

A solution using NumPy or Xarray will be preferred, but ones using other libraries are also welcome.

A desired solution shouldn't depend on the specific distributions of non-nan values of ar_label as the real data I am playing with has a different distribution.

Thank you.

CodePudding user response:

The following method avoid loops at the expense of the required RAM memory.

First of all I defined a matrix containing, for each element of ar_rand, its row and col id:

ids = np.stack(

np.meshgrid(np.arange(ar_label.shape[0]), np.arange(ar_label.shape[1]))

).T #shape (10, 10, 2)

After that I computed all possible ids euclidean distances (basically between all possible pairs in the matrix):

euclidean_ids_distances = np.sqrt(((ids.reshape(-1, 2)[None,:]-ids.reshape(-1, 2)[:,None,:])**2).sum(-1)).reshape(*ar_label.shape,*ar_label.shape)

#shape (10, 10, 10, 10)

The above matrix is quite large and would cause memory problems for bigger nrow. Maybe it is a bit confusing, but it's simpler then it seems. In practice if we want to see the euclidean distances between the element [0,0] and all the other ones, we can find them in euclidean_ids_distances[0,0]:

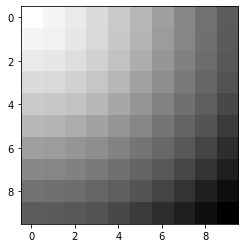

plt.imshow(euclidean_ids_distances[0,0], cmap="Greys")

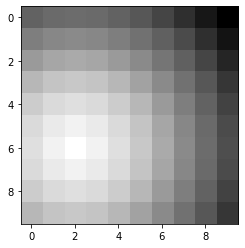

Same thing for the element [6,2] (for example):

In this way, for each non-null element from ar_rand, I could find the argmin distance in euclidean_ids_distances matrix considering only non-null ar_label ids:

label_ids = ids[~np.isnan(ar_label)] [euclidean_ids_distances[~np.isnan(ar_rand)][:,~np.isnan(ar_label)].argmin(-1)]

#shape (6, 2)

#where 6 is the number of non-null ar_rand elements, 2 is the couple of coordinates (row and col)

Finally I created a copy of ar_rand and replaced the non-null values with the values in the ar_label specified in the label_ids

ar_rand_copy = ar_rand.copy()

ar_rand_copy[~np.isnan(ar_rand_copy)] = ar_label[label_ids[:,0], label_ids[:,1]]

# array([[ nan, nan, nan, nan, nan, nan, nan, nan, nan, nan],

# [ nan, nan, nan, nan, nan, 300., nan, nan, nan, nan],

# [ nan, nan, nan, nan, nan, nan, nan, nan, nan, nan],

# [ nan, nan, nan, nan, nan, nan, nan, nan, 300., nan],

# [ nan, 100., nan, nan, nan, nan, nan, nan, nan, nan],

# [ nan, nan, nan, nan, nan, nan, 300., nan, nan, nan],

# [ nan, nan, nan, nan, nan, nan, nan, nan, nan, nan],

# [ nan, nan, nan, nan, nan, nan, 200., nan, nan, nan],

# [ nan, nan, nan, nan, nan, nan, nan, nan, nan, nan],

# [ nan, nan, 200., nan, nan, nan, nan, nan, nan, nan]])

CodePudding user response:

Here is my solution. The logic is:

- create a NumPy array that contains the distance between a zero element of

ar_randand all other non-nan elements ofar_label - get the coordinates of the element of

ar_labelwhich has the shortest distance - replace the element of

ar_landwith the element ofar_labelat the coordinates from 2 - iterate 1-3 over all zero elements

, which is written as:

i_rand, j_rand = np.where(ar_rand == 0.0)

i_label, j_label = np.where(ar_label==ar_label)

ar_rand_rep = np.zeros_like(ar_rand) * np.nan

for n in range(len(i_rand)): # apply over grids to be replaced

ar_dist = np.array([np.sqrt((i_rand[n] - i_label[i])**2 (j_rand[n] - j_label[i])**2) for i in range(len(i_label))])

argsort = ar_dist.argsort()

ar_dist = np.take_along_axis(ar_dist, argsort, axis=0)

i_label = np.take_along_axis(i_label, argsort, axis=0)

j_label = np.take_along_axis(j_label, argsort, axis=0)

ar_rand_rep[i_rand[n], j_rand[n]] = ar_label[i_label[0], j_label[0]]

ar_rand_rep

array([[ nan, nan, nan, nan, nan, nan, nan, nan, nan, nan],

[ nan, nan, nan, nan, nan, 300., nan, nan, nan, nan],

[ nan, nan, nan, nan, nan, nan, nan, nan, nan, nan],

[ nan, nan, nan, nan, nan, nan, nan, nan, 300., nan],

[ nan, 100., nan, nan, nan, nan, nan, nan, nan, nan],

[ nan, nan, nan, nan, nan, nan, 300., nan, nan, nan],

[ nan, nan, nan, nan, nan, nan, nan, nan, nan, nan],

[ nan, nan, nan, nan, nan, nan, 200., nan, nan, nan],

[ nan, nan, nan, nan, nan, nan, nan, nan, nan, nan],

[ nan, nan, 200., nan, nan, nan, nan, nan, nan, nan]])

Other solutions are of course welcome, especially ones without a loop.

CodePudding user response:

You can try this:

import numpy as np

nrow = 10

ar_label = np.arange(nrow**2).reshape(nrow, nrow)

ar_label[1:4, 1:4] = 100

ar_label[6:9, 2:5] = 200

ar_label[2:5, 6:9] = 300

ar_label = np.where(ar_label<100, np.nan, ar_label)

np.random.seed(11)

ar_rand = np.random.randint(0, nrow*3, size=nrow**2).reshape(nrow, nrow)

ar_rand = np.where(ar_rand==0, ar_rand, np.nan)

def distance2d(xp, yp, x, y):

return np.hypot(x - xp, y - yp)

def unstack(a, axis):

return np.moveaxis(a, axis, 0)

yy,xx = np.mgrid[0:10, 0:10]

z = np.dstack((yy,xx)) # coordinates

blanks = z[~np.isnan(ar_rand)]

#print(blanks)

values = z[~np.isnan(ar_label)]

xv, yv = unstack(values, -1)

# distance between blanks and values only

# saves memory

for p in blanks:

xb,yb = p

id = distance2d(xb, yb, xv, yv).argmin()

xc,yc = values[id]

ar_rand[xb,yb] = ar_label[xc,yc]

print(ar_rand)

The code can be vectorized using numba for large arrays.

CodePudding user response:

Another way you could go that might be faster and doesn't reinvent the wheel as much is to use scipy.interpolate.NearestNDInterpolator.

ar_label_finite = np.isfinite(ar_label)

interpolant = scipy.interpolate.NearestNDInterpolator(

x=np.argwhere(ar_label_finite),

y=ar_label[ar_label_finite],

)

mask = ar_rand == 0

ar_rand_replaced = ar_rand.copy()

ar_rand_replaced[mask] = interpolant(*np.nonzero(mask))

print(ar_rand_replaced)

which outputs

[[ nan nan nan nan nan nan nan nan nan nan]

[ nan nan nan nan nan 300. nan nan nan nan]

[ nan nan nan nan nan nan nan nan nan nan]

[ nan nan nan nan nan nan nan nan 300. nan]

[ nan 100. nan nan nan nan nan nan nan nan]

[ nan nan nan nan nan nan 300. nan nan nan]

[ nan nan nan nan nan nan nan nan nan nan]

[ nan nan nan nan nan nan 200. nan nan nan]

[ nan nan nan nan nan nan nan nan nan nan]

[ nan nan 200. nan nan nan nan nan nan nan]]