I have implemented a recurrent neural network autoencoder as below:

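    from tensorflow.keras import regularizers
    from tensorflow.keras.layers import Input, GRU, RepeatVector, TimeDistributed, Dense
    from tensorflow.keras.models import Model

    def AE_GRU(X):
        # X has shape (samples, timesteps, features)
        inputs = Input(shape=(X.shape[1], X.shape[2]), name="input")
        # Encoder: compress each sequence into a 4-dimensional vector
        L1 = GRU(8, activation="relu", return_sequences=True, kernel_regularizer=regularizers.l2(0.00), name="E1")(inputs)
        L2 = GRU(4, activation="relu", return_sequences=False, name="E2")(L1)
        # Repeat the encoded vector once per timestep for the decoder
        L3 = RepeatVector(X.shape[1], name="RepeatVector")(L2)
        # Decoder: reconstruct the sequence from the repeated vector
        L4 = GRU(4, activation="relu", return_sequences=True, name="D1")(L3)
        L5 = GRU(8, activation="relu", return_sequences=True, name="D2")(L4)
        output = TimeDistributed(Dense(X.shape[2]), name="output")(L5)
        model = Model(inputs=inputs, outputs=[output])
        return model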

After that, I run the code below to train the autoencoder:

    import tensorflow as tf

    model = AE_GRU(trainX)
    optimizer = tf.keras.optimizers.Adam(learning_rate=0.01)
    model.compile(optimizer=optimizer, loss="mse")
    model.summary()

    epochs = 5
    batch_size = 64
    # Autoencoder: the input is also the reconstruction target
    history = model.fit(
        trainX, trainX,
        epochs=epochs, batch_size=batch_size,
        validation_data=(valX, valX)
    ).history

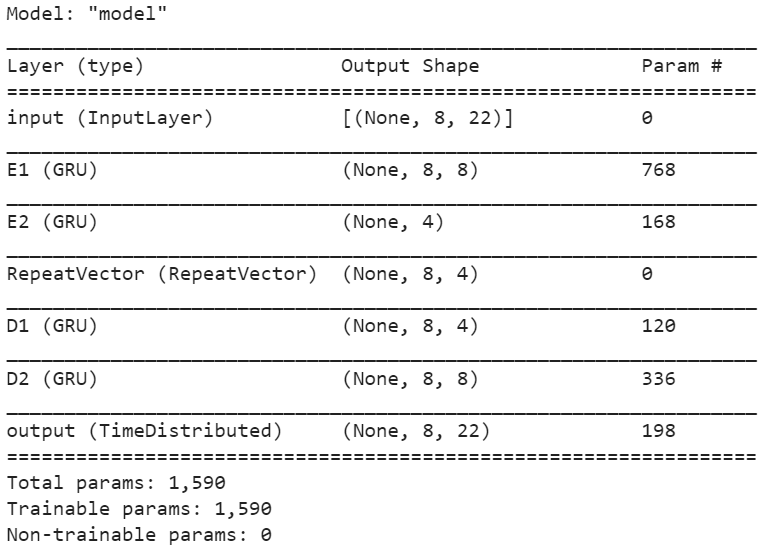

I have also attached the result of model.summary() below.

Finally, I extract the outputs of the second hidden layer by running the code below:

    import numpy as np
    from tensorflow.keras import backend as K

    def all_hidden_layers_output(iModel, dtset):
        inp = iModel.input  # input placeholder
        outputs = [layer.output for layer in iModel.layers]  # all layer outputs
        functors = [K.function([inp], [out]) for out in outputs]  # evaluation functions
        layer_outs = [func([dtset]) for func in functors]
        return layer_outs

    # [2] selects the third entry of model.layers; [0] unwraps the one-element list returned by K.function
    hidden_state_train = all_hidden_layers_output(model, trainX)[2][0]
    hidden_state_val = all_hidden_layers_output(model, valX)[2][0]

    # remove all-zero columns:
    hidden_state_train = hidden_state_train[:, ~np.all(hidden_state_train == 0.0, axis=0)]
    hidden_state_val = hidden_state_val[:, ~np.all(hidden_state_val == 0.0, axis=0)]

    print(f"hidden_state_train.shape={hidden_state_train.shape}")
    print(f"hidden_state_val.shape={hidden_state_val.shape}")

But I don't know why some of the units in this layer return zero for every sample. I expect hidden_state_train and hidden_state_val to be 2D NumPy arrays with 4 non-zero columns (based on the model.summary() information). Any help would be greatly appreciated.

CodePudding user response:

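This may be caused by the dying ReLU problem. ReLU outputs 0 for every negative input, so a unit whose pre-activation stays negative for all samples always returns 0, and because the gradient of ReLU is also 0 in that region, training cannot move the unit back. Have a look at this explanation of the problem: https://towardsdatascience.com/the-dying-relu-problem-clearly-explained-42d0c54e0d24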
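
To make the mechanism concrete, here is a minimal NumPy sketch. It uses a plain dense ReLU layer rather than a GRU, and the data, the weights, and the helper `dead_unit_mask` are all made up for illustration; none of them come from the model in the question. Unit 1 is deliberately given negative weights and a negative bias so that it is "dead".

```python
import numpy as np


def relu(x):
    """Element-wise ReLU: max(x, 0)."""
    return np.maximum(x, 0.0)


def dead_unit_mask(activations):
    """Hypothetical helper: True for every unit (column) that is 0 on all samples."""
    return np.all(activations == 0.0, axis=0)


# Toy input: 100 samples, 3 strictly positive features (assumed data).
X = np.linspace(0.1, 1.0, 300).reshape(100, 3)

# Assumed weights for a 4-unit ReLU layer.
# Unit 1 has only negative weights and a negative bias, so its
# pre-activation is negative for every sample.
W = np.array([
    [0.5, -0.3, 0.2, 0.7],
    [0.1, -0.8, 0.4, 0.3],
    [0.6, -0.2, 0.9, 0.5],
])
b = np.array([0.0, -1.0, 0.0, 0.0])

pre_activation = X @ W + b
hidden = relu(pre_activation)

# Derivative of ReLU: 1 where the pre-activation is positive, 0 elsewhere.
relu_grad = (pre_activation > 0).astype(float)

# Gradient of sum(hidden) with respect to W; column j belongs to unit j.
grad_W = X.T @ relu_grad

mask = dead_unit_mask(hidden)
print("dead units:", np.flatnonzero(mask))          # dead units: [1]
print("gradient for unit 1:", grad_W[:, 1])         # all zeros, so no update
print("shape after dropping dead units:", hidden[:, ~mask].shape)
```

The dead unit receives a zero gradient, so gradient descent leaves its weights unchanged. If this is what is happening in the `E2` layer, two things that may be worth trying are leaving the GRU `activation` at its Keras default (`tanh`) instead of `relu`, and lowering the learning rate from 0.01 (the Keras default for Adam is 0.001), since large updates can push a unit into the all-negative regime.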