I've got a Python package running in a container.

Is it best practice to install it in /opt/myapp within the container?

Should the logs go in /var/opt/myapp?

Should the config files go in /etc/opt/myapp?

Is anyone recommending writing logs and config files to /opt/myapp/var/log and /opt/myapp/config?

I notice Google Chrome was installed in /opt/google/chrome on my (host) system, but it didn't place any configs in /etc/opt/...

CodePudding user response:

Is it best practice to install it in /opt/myapp within the container?

I put my apps in /app inside my container images, so my Dockerfile starts with:

WORKDIR /app

Should the logs go in /var/opt/myapp?

In the container world, the best practice is for your application to write its logs to stdout and stderr, not to files inside the container. Containers are ephemeral by design and should be treated that way: when a container is stopped and deleted, all of the data on its filesystem is gone.
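On the Python side, this usually means attaching a stream handler to the root logger instead of a file handler. Below is a minimal sketch using the standard logging module; the logger name myapp and the format string are placeholders chosen for illustration, not something taken from the question.

```python
import logging
import sys


def configure_logging(level: int = logging.INFO) -> logging.Logger:
    """Send all log records to stdout so the container runtime can collect them."""
    handler = logging.StreamHandler(sys.stdout)
    handler.setFormatter(
        logging.Formatter("%(asctime)s %(levelname)s %(name)s: %(message)s")
    )
    root = logging.getLogger()
    root.handlers.clear()  # avoid duplicate handlers if this is called twice
    root.addHandler(handler)
    root.setLevel(level)
    return logging.getLogger("myapp")  # "myapp" is a placeholder name


if __name__ == "__main__":
    log = configure_logging()
    log.info("application started")
```

With this in place nothing is written inside the container, and docker logs shows whatever the app emits.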

In a local Docker development environment you can view the logs with docker logs. For example:

Start a container named gettingstarted from the image docker/getting-started:

docker run --name gettingstarted -d -p 80:80 docker/getting-started

Redirect the docker logs output to a local file on the Docker client (the machine from which you run the docker commands):

docker logs -f gettingstarted &> gettingstarted.log &

Open http://localhost to generate some logs.

Follow the log file in real time with tail, or open it with any text viewer:

tail -f gettingstarted.log

Should the config files go in /etc/opt/myapp?

Again, you can put the config files anywhere you want. I like to keep them together with my app, so in the /app directory, but you should not modify the config files once the container is running. Instead, pass the configuration to the container as environment variables at startup with the -e flag. For example, to create a variable MYVAR with the value MYVALUE inside the container, start it this way:

docker run --name gettingstarted -d -p 80:80 -e MYVAR='MYVALUE' docker/getting-started

Exec into the container to see the variable:

docker exec -it gettingstarted sh

/ # echo $MYVAR

MYVALUE

From here it is the responsibility of your containerized app to read these variables and translate them into actual application configuration. Most programming languages can read env vars from code at runtime, but if that is not an option, you can write an entrypoint.sh script that updates the config files with the values supplied through the env vars. The PostgreSQL entrypoint is a good example of this.
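The same idea can also be sketched in Python instead of shell. This is not the PostgreSQL script, only a minimal illustration of the technique: it fills $NAME placeholders in a config template from environment variables before the app starts. The template contents, the DB_HOST and DB_PORT names, and the myapp.ini target are all hypothetical.

```python
import os
import string
import tempfile
from pathlib import Path


def render_config(template: str, env: dict, target: Path) -> str:
    """Fill $NAME placeholders in the template from env and write the result."""
    # substitute() raises KeyError when a placeholder has no value,
    # so a missing variable stops the container at startup.
    rendered = string.Template(template).substitute(env)
    target.write_text(rendered)
    return rendered


if __name__ == "__main__":
    # Hypothetical template and variable names, for illustration only.
    template = "[database]\nhost = $DB_HOST\nport = $DB_PORT\n"
    env = {**os.environ, "DB_HOST": "db.internal", "DB_PORT": "5432"}
    target = Path(tempfile.gettempdir()) / "myapp.ini"
    print(render_config(template, env, target))
    # A real entrypoint would now hand over to the app, e.g. with os.execvp().
```

Failing on a missing variable is a deliberate choice here: a container that refuses to start is easier to diagnose than one running with a half-filled config file.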

In summary, on Linux you can use any folder for your apps, bearing the following in mind:

- Don't use system folders: /bin, /usr/bin, /boot, /proc, /lib

- Don't use mount-point folders: /media, /mnt

- Don't use the /tmp folder, because its contents are usually deleted on restart

- As you found, you could imitate Chrome and use /opt

- You could create your own folder like /acme if several developers log in to the machine, so you can tell them: "No matter the machine or the application, all the custom content of our company will be in /acme". This also helps if you are security-paranoid, because an attacker will not easily guess where your application is. Anyway, if the devil has access to your machine, it is just a matter of time before he finds everything.

- You could use fine-grained permissions to protect the chosen folder
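As a rough illustration of the permissions point, here is a small sketch that creates an application folder and restricts it to its owner and group. It assumes a POSIX system; the temporary directory stands in for a real location such as /acme, and the mode 0o750 is just one reasonable choice, not a recommendation from the answer.

```python
import os
import stat
import tempfile
from pathlib import Path


def create_private_dir(path: Path) -> Path:
    """Create path (if needed) and restrict it to owner rwx and group r-x."""
    path.mkdir(parents=True, exist_ok=True)
    # 0o750: owner read/write/execute, group read/execute, others nothing.
    # chmod is applied explicitly because mkdir's mode is filtered by the umask.
    os.chmod(path, 0o750)
    return path


if __name__ == "__main__":
    # A temporary directory stands in for a real location such as /acme.
    app_dir = create_private_dir(Path(tempfile.mkdtemp()) / "acme")
    print(oct(stat.S_IMODE(app_dir.stat().st_mode)))
```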

Log Folder

Similar to the previous paragraph:

- You could store your logs in the standard location, e.g. /var/log/acme.log

- Or create your own company standard, for example:

  - /acme/log/api.log

  - /acme/webs/web1/app.log

Config Folder

This is the key point for DevOps.

In traditional, manual deployments, certain locations were used to store an app's configuration, such as:

- /etc

- $HOME/.acme/settings.json

But nowadays, and especially if you are using Docker, you should not manually store your settings inside the container or on the host. The best way to have just one build and deploy it n times (dev, test, staging, uat, prod, etc.) is to use environment variables.

One build, n deploys, and the use of env variables are fundamental to DevOps and cloud applications. Check the well-known https://12factor.net/:

- III. Config: Store config in the environment

- V. Build, release, run: Strictly separate build and run stages

It is also good practice in any language. See the Heroku article "Configuration and Config Vars".

So your Python app should not read or expect a file in the filesystem to load its configuration. That may be acceptable for dev, but not for test and prod.

Your Python app should read its configuration from env variables:

import os

print(os.environ['DATABASE_PASSWORD'])

And then inject these values at runtime:

docker run -it -p 8080:80 -e DATABASE_PASSWORD=changeme my_python_app

On your local development machine, export the variable before running your application, in the same shell:

export DATABASE_PASSWORD=changeme

python myapp.py
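Reading os.environ directly works, but a missing variable then surfaces as a bare KeyError wherever it is first used. One possible refinement is to load everything once at startup and fail with a clear message. The sketch below assumes the DATABASE_PASSWORD variable from above; DATABASE_HOST, DATABASE_PORT, and their defaults are hypothetical additions for illustration.

```python
import os
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class Settings:
    database_password: str
    database_host: str
    database_port: int


def load_settings(env: Mapping[str, str] = os.environ) -> Settings:
    """Build Settings from environment variables, failing fast on missing ones."""
    try:
        password = env["DATABASE_PASSWORD"]  # required, no default for secrets
    except KeyError:
        raise RuntimeError("DATABASE_PASSWORD is not set") from None
    return Settings(
        database_password=password,
        # DATABASE_HOST and DATABASE_PORT are hypothetical names with defaults.
        database_host=env.get("DATABASE_HOST", "localhost"),
        database_port=int(env.get("DATABASE_PORT", "5432")),
    )


if __name__ == "__main__":
    settings = load_settings({"DATABASE_PASSWORD": "changeme"})
    print(settings.database_host, settings.database_port)
```

Passing the mapping as a parameter keeps the function easy to test without touching the real environment. In the app itself you would call load_settings() with no arguments.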

Config for a lot of apps

The previous approach works for a couple of apps. But if you move to microservices and micro-frontends, you will have dozens of apps in several languages. In that case, to centralize the configuration you could use:

- Spring Cloud

- ZooKeeper

- https://www.vaultproject.io/

- https://www.doppler.com/

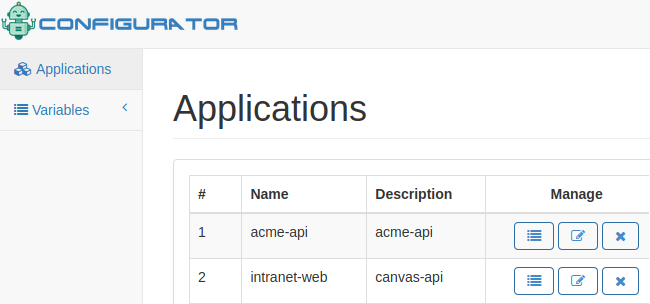

Or the Configurator (I'm the author)