My problem is that I have code that receives the filter column and its values in a list as parameters:

val vars = "age IN ('0')"

val ListPar = "entered_user,2014-05-05,2016-10-10;"

//val ListPar2 = "entered_user,2014-05-05,2016-10-10;revenue,0,5;"

val ListParser : List[String] = ListPar.split(";").map(_.trim).toList

val myInnerList : List[String] = ListParser(0).split(",").map(_.trim).toList

if (myInnerList(0) == "entered_user" || myInnerList(0) == "date" || myInnerList(0) == "dt_action"){

responses.filter(vars + " AND " + responses(myInnerList(0)).between(myInnerList(1), myInnerList(2)))

}else{

responses.filter(vars + " AND " + responses(myInnerList(0)).between(myInnerList(1).toInt, myInnerList(2).toInt))

}

For every field except the date ones, this works flawlessly, but for fields that contain dates it throws an error.

Note: I'm working with Parquet files.

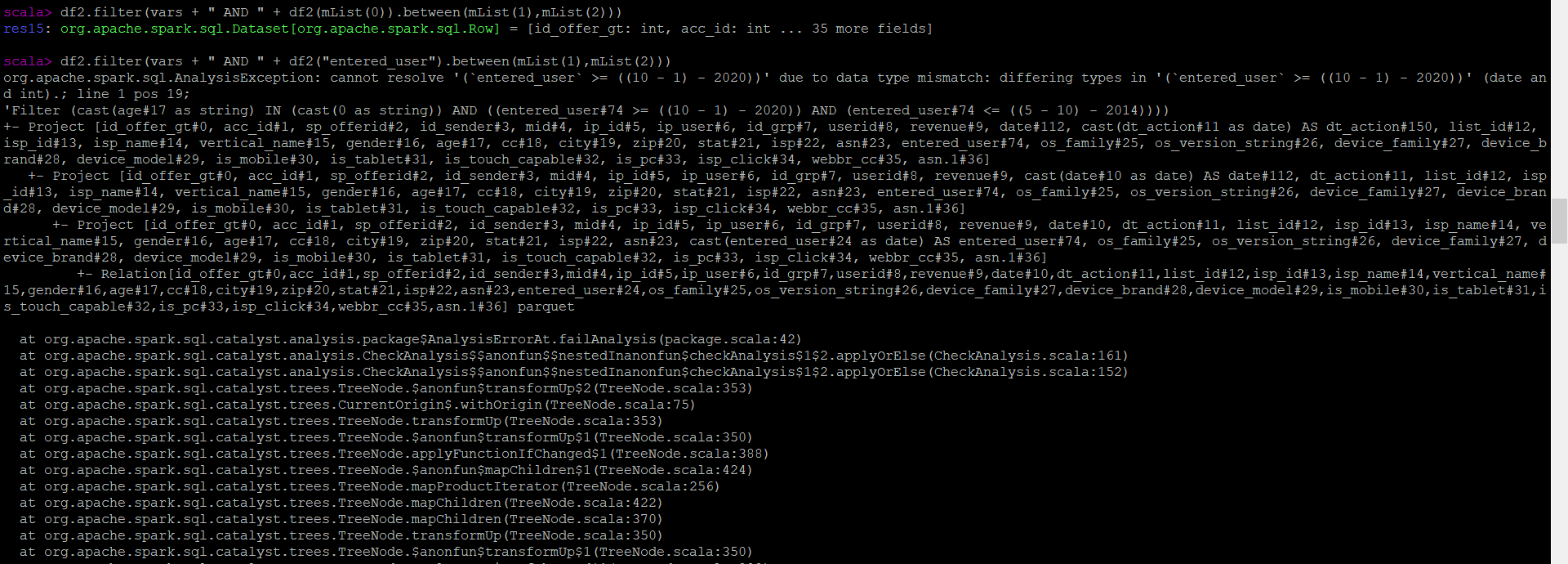

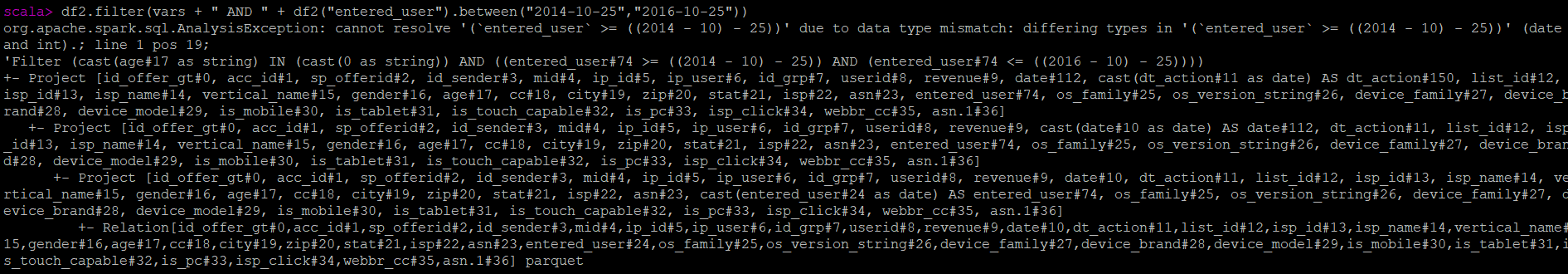

Here is the error.

When I write the query manually, I get the same error.

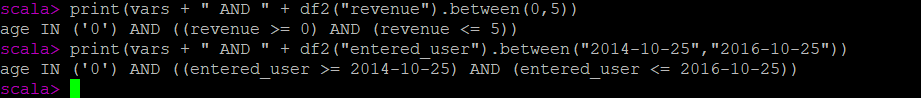

Here is how the query is sent to Spark SQL.

The first one, which filters on revenue, works, but the second one doesn't.

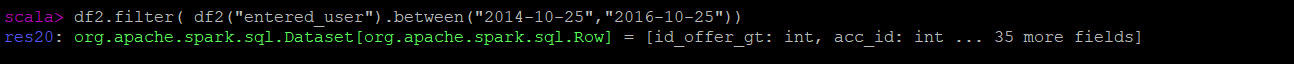

When I filter on the dates alone, without the value of "vars" (which holds the conditions on the other columns), it works.

CodePudding user response:

My issue was that I was mixing SQL and the Spark DataFrame API. When I concatenated my SQL string (the variable "vars") with a condition inside df.filter(), especially one built with the between operator, the result was a string in a format that Spark SQL did not recognise:

age IN ('0') AND ((entered_user >= 2015-01-01) AND (entered_user <= 2015-05-01))

It might seem correct, but after looking at the SQL documentation I found that it was missing parentheses (around vars). It needed to be:

(age IN ('0')) AND ((entered_user >= 2015-01-01) AND (entered_user <= 2015-05-01))
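If the whole predicate is kept as a SQL string, the parenthesised form above can be assembled with plain string handling. The following is only a minimal sketch: the object name `SqlFilterString` and its helpers are hypothetical, the date column names are the ones from the question, and the date bounds are quoted on the assumption that they should be compared as date literals rather than evaluated as arithmetic.

```scala
object SqlFilterString {
  // Column names treated as dates, as listed in the question.
  private val dateColumns = Set("entered_user", "date", "dt_action")

  // Turn one "column,low,high" entry into a parenthesised SQL range condition.
  // A malformed entry (not exactly three fields) throws a MatchError.
  def rangeCondition(entry: String): String = {
    val Array(name, low, high) = entry.split(",").map(_.trim)
    if (dateColumns.contains(name)) s"($name BETWEEN '$low' AND '$high')"
    else s"($name BETWEEN ${low.toInt} AND ${high.toInt})"
  }

  // Wrap the base condition in parentheses and AND it with every ';'-separated entry.
  def fullCondition(base: String, listPar: String): String = {
    val ranges = listPar.split(";").map(_.trim).filter(_.nonEmpty).map(rangeCondition)
    (s"($base)" +: ranges).mkString(" AND ")
  }
}
```

The resulting string could then be passed to the string overload of filter, for example responses.filter(SqlFilterString.fullCondition(vars, ListPar)).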

The solution was to combine the two correctly: wrapping the variable vars in expr turns the SQL string into a Column, which can then be joined to the between condition with && to give the desired syntax:

responses.filter(expr(vars) && responses(myInnerList(0)).between(myInnerList(1), myInnerList(2)))
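The same idea can be extended to every entry in the parameter list, such as the commented-out ListPar2 in the question. This is a sketch under assumptions, not the original code: the object `ColumnFilter` and its method names are hypothetical, and `col(name)` is used in place of `responses(name)` on the assumption that only one DataFrame is involved.

```scala
import org.apache.spark.sql.{Column, DataFrame}
import org.apache.spark.sql.functions.{col, expr}

object ColumnFilter {
  // Column names treated as dates, as listed in the question.
  private val dateColumns = Set("entered_user", "date", "dt_action")

  // Split "col,low,high;col,low,high;" into (column, low, high) triples.
  def parseEntries(listPar: String): List[(String, String, String)] =
    listPar.split(";").map(_.trim).filter(_.nonEmpty).toList.map { entry =>
      val Array(name, low, high) = entry.split(",").map(_.trim)
      (name, low, high)
    }

  // Build one range predicate; non-date bounds are converted to Int as in the question.
  def rangePredicate(name: String, low: String, high: String): Column =
    if (dateColumns.contains(name)) col(name).between(low, high)
    else col(name).between(low.toInt, high.toInt)

  // Parse the SQL fragment with expr, then AND every range predicate onto it.
  def applyFilters(df: DataFrame, sqlCondition: String, listPar: String): DataFrame = {
    val predicate = parseEntries(listPar).foldLeft(expr(sqlCondition)) {
      case (acc, (name, low, high)) => acc && rangePredicate(name, low, high)
    }
    df.filter(predicate)
  }
}
```

With these helpers, the call would look like ColumnFilter.applyFilters(responses, vars, ListPar). Keeping every condition as a Column avoids turning the between expression back into text.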