Quick background. I have a string of words - I separate out those words into a List (I've tried HashSet it doesn't make any difference - and you lose the ordered nature of a List).

I then manipulate the original words in many dull ways - and create thousands of "new strings" - all of these strings are in a StringBuilder which has been set .ToString();

At the end of the manipulation, I want to QC those new strings - and be sure that every word that was in the original set - is still somewhere in those new strings and I have not accidentally lost a word.

That original string, can run to hundreds of individual words.

Short Example:

List<string> uniqueWords = new List<string> { "two", "three", "weather sunday" };

string final = "two and tomorrow\n\rtwo or wednesday\n\rtwo with thursday\n\rtwo without friday\n\rthree gone tomorrow\n\rthree weather saturday\n\rthree timely sunday";

The output string can run to tens of millions of characters, millions of words, 200,000 rows of data (when split). You may notice that there are words that are actually two words separated by a space - so I cannot simply split out the individual words by splitting on the space as comparing them to the original would fail, and I need to confirm the words are exactly as they appeared originally - having weather somewhere and sunday somewhere - is not the same as having 'weather sunday' - for my purposes.

The the code I have tried so far and have benchmarked:

First attempt:

var allWords = uniqueWords.Where(substring => final.Contains(substring, StringComparison.CurrentCultureIgnoreCase)).ToList();

Second Attempt:

List<string> removeableList = new(uniqueWords);

foreach (var item in uniqueWords)

{

if (removeableList.Count == 0)

{

break;

}

if (final.Contains(item))

{

removeableList.Remove(item);

}

}

Third Attempt:

List<string> removeableList = new(uniqueWords);

for (int i = uniqueWords.Count; i >= 0; i--)

{

if (removeableList.Count == 0)

{

break;

}

if (final.Contains(uniqueWords[i]))

{

removeableList.Remove(uniqueWords[i]);

}

}

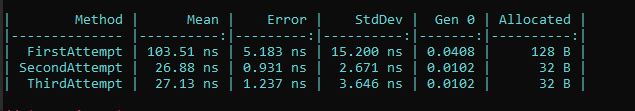

These are the results:

These results are repeatable, though I will say that the First Attempt tends to fluctuate quite a lot while the Second and Third Attempts tend to remain at about the same level - the Third Attempt does seem to do better than the Second rather consistently.

Are there any options that I am missing?

I have tried it using a Regex Matches collection into a HashSet - oh that was bad, 4 times worse than the First Attempt.

If there is a way to improve the performance on this task I would love to find it.

CodePudding user response:

Your attempt #1 uses CurrentCultureIgnoreCase which will be slow. But even after removing that, you are adding to the list, rather than removing, and therefore the list might need to be resized.

You are also measuring two different things: option #1 is getting the list of words which are in final, the others get the list of words which are not.

Further options include:

- Use

List.RemoveAll

List<string> remainingWords = new(uniqueWords);

remainingWords.RemoveAll(final.Contains); // use delegate directly, without anonymous delegate

- Use a pre-sized list and use Linq

List<string> remainingWords = new(uniqueWords.Length);

remainingWords.AddRange(uniqueWords.Where(s => !final.Contains(s)));

Each of these two options can be flipped depending on what result you are trying to achieve, as mentioned.

List<string> words = new(uniqueWords);

words.RemoveAll(s => !final.Contains(s));

List<string> words = new(uniqueWords.Length);

words.AddRange(uniqueWords.Where(final.Contains)); // use delegate directly, without anonymous delegate

CodePudding user response:

@Charlieface, thanks for that - I tried those, I think you have a point about adding to a list - as that appears much slower. For me it doesn't matter whether it is adding or removing, the result is a True/False return - whether the list is empty or of the size of the original list.

Sixth Attempt:

List<string> removeableList = new(uniqueWords.Count);

removeableList.AddRange(uniqueWords.Where(s => !parsedTermsComplete!.Contains(s)));

Seventh Attempt:

List<string> removeableList = new(uniqueWords);

removeableList.RemoveAll(parsedTermsComplete!.Contains);

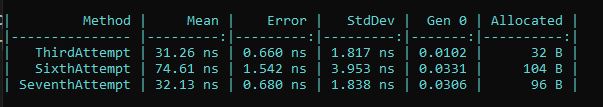

Results in comparison to Third Attempt (fastest generally):

The adding does appear slower - and memory is a little higher for the RemoveAll but timing is consistent - bearing in mind it fluctuates depending on what Windows decides to do at any given moment...

Here is an interesting implementation of the AhoCorasickTree method - which I saw mentioned on this site somewhere else.

My knowledge on this is extremely limited so this may not be a good implementation at all - I am not saying it is a good implementation just that it works - this comes from a nuget package, but I am unsure on SO's policy on nuget package links, so won't link for now. In testing, creating an array was faster than creating a list.

Eighth Attempt:

var wordArray = uniqueWords.ToArray();

int i = uniqueWords.Count - 1;

foreach (var item in wordArray)

{

var keyWords = new AhoCorasickTree(new[] { item });

if (keyWords.Contains(parsedTermsComplete))

{

uniqueWords.RemoveAt(i);

}

i--;

}

I noticed in testing that creating a "removableList" was actually slower than creating a removableArray (found this out implementing the above Aho run). I updated the Third Attempt to incorporate this:

var removeableArray = uniqueWords.ToArray();

for (int i = removeableArray.Length -1; i >= 0; i--)

{

if (!uniqueWords.Any())

{

break;

}

if (parsedTermsComplete!.Contains(removeableArray[i]))

{

uniqueWords.RemoveAt(i);

}

}

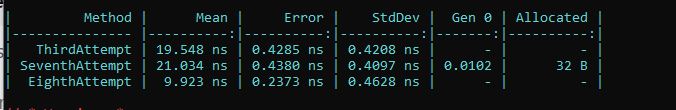

The Benchmarks come out like this, the Third Attempt is updated to an array, the Seventh Attempt is the AhoCorasick implementation on a list, and the Eighth Attempt is the AhoCorasick implementation on an Array.

The ToArray - does seem faster than List, which is good to know.

My only issue with the AhoCorasick is that in practice - in a WASM application - this is actually much slower, so not a good option for me - but I put it here because it does seem to be much faster in Benchmarks (may be using multiple threads where WASM is limited to 1) and doesn't appear to allocate any memory, so might be useful to someone - interesting that the Third Attempt also appears to be allocated no memory when using an Array implementation whereas on a list it was allocated.