I'm trying to figure out how to program a certain type of load on the CPU that keeps it working constantly, but at moderate stress.

The only approach I know for loading a CPU with work below its maximum possible performance is to alternate between giving the CPU something to do and sleeping for some time. For example, to achieve 20% CPU usage, do some computation that takes e.g. 0.2 seconds and then sleep for 0.8 seconds. The CPU usage will then be roughly 20%.
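The 0.2 s / 0.8 s arithmetic can be written as a small helper, together with a loop that applies it and measures the CPU fraction it actually achieved (CPU time from `time.process_time()` divided by wall time). This is a minimal sketch: the function names are hypothetical, and busy-waiting on the clock stands in for "some computation". As described, the core still alternates between running flat out and idling.

```python
import time


def split_period(period, target_pct):
    """Split one period into (busy, idle) seconds for a target_pct% load."""
    busy = period * target_pct / 100.0
    return busy, period - busy


def run_duty_cycle(target_pct, period, periods):
    """Alternate busy-waiting and sleeping; return the measured CPU fraction."""
    busy, idle = split_period(period, target_pct)
    cpu_start, wall_start = time.process_time(), time.perf_counter()
    for _ in range(periods):
        deadline = time.perf_counter() + busy
        while time.perf_counter() < deadline:
            pass  # busy phase: the core runs as fast as it can
        time.sleep(idle)  # idle phase: the OS is free to idle the core
    return (time.process_time() - cpu_start) / (time.perf_counter() - wall_start)
```

`split_period(1.0, 20)` gives roughly `(0.2, 0.8)`, matching the example above, and `run_duty_cycle(20, 1.0, 10)` should report a fraction near 0.2.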

However, this essentially means the CPU will be jumping between max performance and idle all the time.

I wrote a small Python program where I create a process for each CPU core, set its affinity so each process runs on a designated core, and give it some absolutely meaningless load:

```python
def actual_load_cycle():
    x = list(range(10000))
    del x
```

Then I call this procedure repeatedly in a loop, sleeping after each batch so that the working time is N% of the total time:

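```python
import time

# CPU_LOAD_TARGET (target load in percent) and coef (load cycles per burst) are set elsewhere;
# actual_load_cycle() is the function defined above.
while True:
    start = time.perf_counter()  # replaces the custom timer.mark_time(timer_marker) helper
    for i in range(coef):
        actual_load_cycle()
    elapsed = time.perf_counter() - start  # replaces timer.get_time_since(timer_marker)
    # Now sleep: the elapsed busy time should be CPU_LOAD_TARGET% of the whole period.
    time_to_sleep = elapsed / CPU_LOAD_TARGET * (100 - CPU_LOAD_TARGET)
    time.sleep(time_to_sleep)
```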

It works well, giving a load within 7% of the desired CPU_LOAD_TARGET value; I don't need a precise amount of load. But it drives the CPU temperature very high: with CPU_LOAD_TARGET=35 (real CPU usage reported by the system is around 40%), the CPU temperature goes up to 80 °C. Even with a minimal target like 5%, the temperature still spikes, just not as much: up to 72-73 °C.

I believe the reason is that during those 20% of the time the CPU works as hard as it can, and it doesn't cool down fast enough while sleeping afterwards.

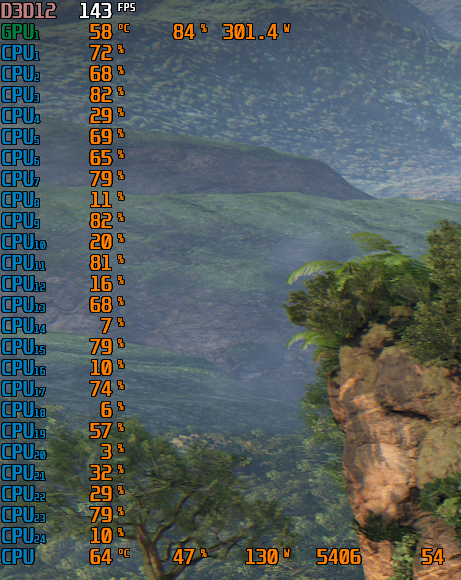

But when I'm running a game like Uncharted 4, the CPU usage measured by MSI Afterburner is 42-47%, yet the temperatures stay under 65 °C.

How can I achieve similar results? How can I program a load that keeps CPU usage high while the work itself stays relatively relaxed, as games seem to do?

Thanks!

Answer:

The heat dissipation of a CPU mainly depends on its power consumption, which depends heavily on the workload, and more precisely on the instructions being executed and the number of active cores. Modern processors are very complex, so it is very hard to predict the power consumption of a given workload, especially when that workload is Python code executed by the CPython interpreter.

There are many factors that can impact the power consumption of a modern processor. The most important one is frequency scaling. Mainstream x86-64 processors can adapt the frequency of a core based on the kind of computation being done (e.g. wide SIMD floating-point vectors such as the ZMM registers of AVX-512F vs. scalar 64-bit integers), the number of active cores (the more active cores, the lower the frequency), the current temperature of the core, the time spent executing instructions vs. sleeping, etc. On modern processors, the memory hierarchy can draw a significant amount of power, so operations involving the memory controller, and more generally the RAM, can consume more power than operations on in-core registers. Moreover, depending on the instructions actually executed, the processor needs to enable or disable some parts of its integrated circuit (e.g. SIMD units, the integrated GPU, etc.), and not all of them can be enabled at the same time due to TDP constraints (see Dark silicon). Floating-point SIMD instructions tend to consume more energy than integer SIMD instructions. Even weirder, the consumption can depend on the input data, since transistors may switch more frequently from one state to another with some data (researchers found this while computing matrix multiplication kernels on different kinds of platforms with different kinds of input). The processor adapts its power automatically, since it would be insane (if even possible) for engineers to consider every possible case and every possible dynamic workload in advance.
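On Linux, one way to watch this frequency scaling while a load is running is to sample the cpufreq files in sysfs. This sketch assumes a Linux system exposing `/sys/devices/system/cpu/cpuN/cpufreq/` (frequencies there are reported in kHz); the helper names are hypothetical, and the functions simply return `None` elsewhere (e.g. on Windows, where a monitoring tool is needed instead).

```python
from pathlib import Path
from typing import Optional

# Linux cpufreq sysfs layout (assumption: the cpufreq driver is present).
CPUFREQ = "/sys/devices/system/cpu/cpu{}/cpufreq/{}"


def _read(cpu: int, name: str) -> Optional[str]:
    """Read one cpufreq attribute, or None if it is not available."""
    try:
        return Path(CPUFREQ.format(cpu, name)).read_text().strip()
    except OSError:  # missing file: non-Linux OS, VM, or no cpufreq driver
        return None


def core_freq_mhz(cpu: int = 0) -> Optional[float]:
    """Current frequency of one core in MHz (sysfs reports kHz), or None."""
    raw = _read(cpu, "scaling_cur_freq")
    return int(raw) / 1000.0 if raw and raw.isdigit() else None


def governor(cpu: int = 0) -> Optional[str]:
    """Active cpufreq governor, e.g. 'performance' or 'powersave', or None."""
    return _read(cpu, "scaling_governor")
```

Calling `core_freq_mhz()` once per second from a separate shell while the load runs shows whether the core stays at its turbo frequency or drops.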

One of the cheapest x86 instructions is NOP, which basically means "do nothing". That said, the processor can run at its highest turbo frequency while executing a loop of NOPs, resulting in pretty high power consumption. In fact, some processors can execute several NOPs in parallel on multiple execution units of a given core, keeping all the available ALUs busy. Funny point: running dependent instructions with a high latency might actually reduce the power consumption of the target processor.

The MWAIT/MONITOR instructions provide hints that allow the processor to enter an implementation-dependent optimized state. This includes lower power consumption, possibly due to a lower frequency (e.g. no turbo) and the use of sleep states. Basically, your processor can sleep for a very short time to reduce its power consumption, and can then sustain a high frequency for longer thanks to the lower power / heat dissipation beforehand. The behaviour is similar to humans: the deeper the sleep, the faster the processor can be afterwards, but the deeper the sleep, the longer it takes to (completely) wake up. The bad news is that these instructions require very high privileges AFAIK, so you basically cannot use them from user-land code. AFAIK, there are user-land equivalents, UMWAIT and UMONITOR, but they are not yet implemented except maybe in very recent processors. For more information, please read this post.

In practice, the default CPython interpreter consumes a lot of power because it makes a lot of memory accesses (including indirections and atomic operations), executes a lot of branches that need to be predicted by the processor (which has special power-hungry units for that), and performs a lot of dynamic jumps across a large body of code. The pure-Python code being executed does not reflect the instructions actually executed by the processor, since most of the time is spent in the interpreter itself. Thus, I think you need to use a lower-level language like C or C++ to better control the kind of workload being executed. Alternatively, you can use a JIT compiler like Numba to get better control while still writing Python code (though not pure-Python code anymore). Still, keep in mind that the JIT can generate many unwanted instructions that can result in unexpectedly higher power consumption. Conversely, a JIT compiler can also optimize trivial code away, like a sum from 1 to N (simplified to just an N*(N+1)/2 expression).
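The sum case is easy to check: the loop and the closed form always agree, which is what allows an optimizing compiler (Numba builds on LLVM) to replace the whole loop with a few arithmetic instructions, so such a "load" loop may end up doing almost no work. The function names below are illustrative only.

```python
def loop_sum(n: int) -> int:
    """Sum 1..n the way the source code literally says: one addition per step."""
    total = 0
    for i in range(1, n + 1):
        total += i
    return total


def closed_form_sum(n: int) -> int:
    """Same result as N*(N+1)/2; integer division is exact since N*(N+1) is even."""
    return n * (n + 1) // 2


# The two always agree, so a compiler is allowed to substitute one for the other.
for n in (0, 1, 10, 1000):
    assert loop_sum(n) == closed_form_sum(n)
```

A load-generating kernel therefore needs a loop-carried computation the compiler cannot fold into a formula, such as the add, multiply and mask steps in the example that follows.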

Here is an example of code:

```python
import numba as nb

def test(n):
    s = 1
    for i in range(1, n):
        s += i
        s *= i
        s &= 0xFF  # keep s small so the loop cannot be folded into a formula
    return s

pythonTest = test
numbaTest = nb.njit('(int64,)')(test)  # Compile the function

pythonTest(1_000_000_000)  # takes about 108 seconds
numbaTest(1_000_000_000)   # takes about 1 second
```

In this code, the Python function takes 108 times longer to execute than the Numba function on my machine (i5-9600KF processor), so one should expect 108 times more energy to be needed to execute the Python version. However, in practice, it is even worse: the pure-Python function causes the target core to draw much higher power (not just more energy) than the equivalent compiled Numba implementation on my machine. This can be clearly seen on the temperature monitor:

Base temperature when nothing is running: 39°C

Temperature during the execution of pythonTest: 55°C

Temperature during the execution of numbaTest: 46°C
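The energy comparison can be made explicit by writing each run's energy as its average power times its duration, $E = \bar{P}\,t$:

$$\frac{E_{\text{py}}}{E_{\text{nb}}} = \frac{\bar{P}_{\text{py}}}{\bar{P}_{\text{nb}}} \cdot \frac{t_{\text{py}}}{t_{\text{nb}}} \approx 108 \cdot \frac{\bar{P}_{\text{py}}}{\bar{P}_{\text{nb}}}$$

With equal power the ratio would be about 108; since the higher temperature under `pythonTest` points to a higher average power ($\bar{P}_{\text{py}} > \bar{P}_{\text{nb}}$), the actual energy ratio should be larger than 108.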

Note that my processor was running at 4.4-4.5 GHz in all cases (because the performance governor was selected). The temperature was read after 30 seconds in each case and was stable (thanks to the cooling system). The functions were run in a while(True) loop during the benchmark.
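To reproduce this kind of measurement, a deadline-based version of that while(True) loop is convenient. This is a minimal sketch: `run_for` and `kernel` are hypothetical names, `kernel` is the same pure-Python loop as `test` in the example above, and the temperature itself still has to be read from an external monitor.

```python
import time


def kernel(n):
    """Same pure-Python loop as `test` above (add, multiply, mask to 8 bits)."""
    s = 1
    for i in range(1, n):
        s += i
        s *= i
        s &= 0xFF
    return s


def run_for(func, arg, seconds):
    """Call func(arg) back to back until `seconds` of wall time have elapsed.

    Returns the number of completed calls, so different runs can be compared.
    """
    calls = 0
    deadline = time.perf_counter() + seconds
    while time.perf_counter() < deadline:
        func(arg)
        calls += 1
    return calls
```

For example, `run_for(kernel, 10_000_000, 30.0)` keeps one core busy for roughly 30 seconds; passing `nb.njit(kernel)` instead gives the compiled variant.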

Note that games often use multiple cores and do a lot of synchronization (at least to wait for the rendering part to be completed). As a result, the target processor can use a slightly lower turbo frequency (due to TDP constraints) and have a lower temperature thanks to the small sleeps (which save energy).
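A simplified, hypothetical sketch of that pattern in Python: each frame does a bounded chunk of work and then sleeps until the next frame deadline, so the core alternates between work and many short waits per second instead of long busy and idle phases. `frame_loop`, the 60 fps default and the work function are assumptions for illustration; whether this actually runs cooler on a given CPU depends on the hardware effects described above (and on CPython's own overhead), so it is not a guaranteed fix.

```python
import time


def frame_loop(work, fps=60.0, frames=600):
    """Call work() once per frame, then sleep until the next frame deadline.

    Deadlines advance by a fixed period, so a slow frame is compensated by
    shorter waits afterwards instead of delaying every later frame.
    Returns the total wall time in seconds.
    """
    period = 1.0 / fps
    start = time.perf_counter()
    next_frame = start
    for _ in range(frames):
        work()
        next_frame += period
        delay = next_frame - time.perf_counter()
        if delay > 0:
            time.sleep(delay)  # short wait, like waiting for the rendering step
    return time.perf_counter() - start
```

For example, `frame_loop(lambda: sum(range(20_000)), fps=60, frames=600)` runs for about 10 seconds; the amount of work done per frame is what sets the CPU usage.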

Answer:

1. Reboot. First step: save your work and restart your PC. ...
2. End or Restart Processes. Open the Task Manager (CTRL+SHIFT+ESCAPE). ...
3. Update Drivers. ...
4. Scan for Malware. ...
5. Power Options. ...
6. Find Specific Guidance Online. ...
7. Reinstalling Windows.