I can't get the row format right when using pandas `read_html()`. I'm looking for adjustments either to the call itself or to the underlying HTML (scraped via bs4) to get the desired output.

Current output:

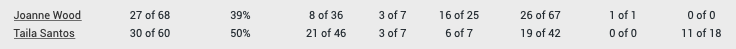

(Note that it is a single row containing two sets of data; ideally it should be split into two rows, as below.)

Desired:

Code to replicate the issue:

import requests

import pandas as pd

from bs4 import BeautifulSoup # alternatively

url = "http://ufcstats.com/fight-details/bb15c0a2911043bd"

df = pd.read_html(url)[-1] # last table

df.columns = [str(i) for i in range(len(df.columns))]

# to get the html via bs4

headers = {

"Access-Control-Allow-Origin": "*",

"Access-Control-Allow-Methods": "GET",

"Access-Control-Allow-Headers": "Content-Type",

"Access-Control-Max-Age": "3600",

"User-Agent": "Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:52.0) Gecko/20100101 Firefox/52.0",

}

req = requests.get(url, headers=headers)  # pass by keyword; the second positional argument is params

soup = BeautifulSoup(req.content, "html.parser")

table_html = soup.find_all("table", {"class": "b-fight-details__table"})[-1]

CodePudding user response:

How to (quick) fix with beautifulsoup

You can build a dict keyed by the table headers, then iterate over each `td` and store the list of values held in its `p` elements:

data = {}

header = [x.text.strip() for x in table_html.select('tr th')]

for i, td in enumerate(table_html.select('tr:has(td) td')):
    data[header[i]] = [x.text.strip() for x in td.select('p')]

pd.DataFrame.from_dict(data)

Example

import requests

import pandas as pd

from bs4 import BeautifulSoup # alternatively

url = "http://ufcstats.com/fight-details/bb15c0a2911043bd"

# to get the html via bs4

headers = {

"Access-Control-Allow-Origin": "*",

"Access-Control-Allow-Methods": "GET",

"Access-Control-Allow-Headers": "Content-Type",

"Access-Control-Max-Age": "3600",

"User-Agent": "Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:52.0) Gecko/20100101 Firefox/52.0",

}

req = requests.get(url, headers=headers)  # pass by keyword; the second positional argument is params

soup = BeautifulSoup(req.content, "html.parser")

table_html = soup.find_all("table", {"class": "b-fight-details__table"})[-1]

data = {}

header = [x.text.strip() for x in table_html.select('tr th')]

for i, td in enumerate(table_html.select('tr:has(td) td')):
    data[header[i]] = [x.text.strip() for x in td.select('p')]

pd.DataFrame.from_dict(data)

Output

| Fighter | Sig. str | Sig. str. % | Head | Body | Leg | Distance | Clinch | Ground |
|---|---|---|---|---|---|---|---|---|
| Joanne Wood | 27 of 68 | 39% | 8 of 36 | 3 of 7 | 16 of 25 | 26 of 67 | 1 of 1 | 0 of 0 |
| Taila Santos | 30 of 60 | 50% | 21 of 46 | 3 of 7 | 6 of 7 | 19 of 42 | 0 of 0 | 11 of 18 |
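For anyone who wants to try the idea without hitting the site, here is a minimal offline sketch of the same dict-of-columns approach. The `SAMPLE_HTML` string and the `table_to_frame` helper are made up for illustration (a trimmed-down guess at the table's shape, not the real page), and it assumes every `td` holds the same number of `p` tags.

```python
import pandas as pd
from bs4 import BeautifulSoup

# Hypothetical, trimmed-down stand-in for the scraped table: a single <tr>
# whose <td> cells each hold one <p> per fighter.
SAMPLE_HTML = """
<table class="b-fight-details__table">
  <thead><tr><th>Fighter</th><th>Sig. str</th><th>Head</th></tr></thead>
  <tbody><tr>
    <td><p>Joanne Wood</p><p>Taila Santos</p></td>
    <td><p>27 of 68</p><p>30 of 60</p></td>
    <td><p>8 of 36</p><p>21 of 46</p></td>
  </tr></tbody>
</table>
"""


def table_to_frame(table):
    """Build one column per <th>, with one row per <p> found in each <td>."""
    headers = [th.get_text(strip=True) for th in table.select("tr th")]
    cells = table.select("tr:has(td) td")
    # Pair each header with its cell; the <p> texts become that column's values.
    data = {
        name: [p.get_text(strip=True) for p in td.select("p")]
        for name, td in zip(headers, cells)
    }
    return pd.DataFrame(data)


soup = BeautifulSoup(SAMPLE_HTML, "html.parser")
df = table_to_frame(soup.find("table", {"class": "b-fight-details__table"}))
print(df)
```

If the cells ever hold different numbers of `p` tags, `pd.DataFrame` will raise because the columns have unequal lengths, so that case would need handling separately.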

CodePudding user response:

A similar idea: use `enumerate` to determine the number of rows, but target the table with `:-soup-contains`, then use an `nth-child` selector inside the list comprehension to extract each row. pandas then converts the resulting list of lists into a DataFrame. This assumes any further rows follow the same pattern as the current two.

from bs4 import BeautifulSoup as bs

import requests

import pandas as pd

r = requests.get('http://ufcstats.com/fight-details/bb15c0a2911043bd')

soup = bs(r.content, 'lxml')

table = soup.select_one(
    '.js-fight-section:has(p:-soup-contains("Significant Strikes")) table')

df = pd.DataFrame(
    [[i.text.strip() for i in table.select(f'tr:nth-child(1) td p:nth-child({n + 1})')]
     for n, _ in enumerate(table.select('tr:nth-child(1) > td:nth-child(1) > p'))],
    columns=[i.text.strip() for i in table.select('th')])

print(df)
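The selector logic can also be checked offline. The sketch below runs the same `:-soup-contains` plus `nth-child` approach against a made-up `SAMPLE_HTML` snippet (an assumption about the page's structure, not a copy of it). It uses `html.parser` so `lxml` is not required, and it assumes a soupsieve version recent enough to support `:-soup-contains`.

```python
import pandas as pd
from bs4 import BeautifulSoup

# Hypothetical miniature of the page: a section titled "Significant Strikes"
# whose table packs both fighters into a single <tr>.
SAMPLE_HTML = """
<section class="js-fight-section">
  <p>Significant Strikes</p>
  <table>
    <thead><tr><th>Fighter</th><th>Sig. str</th></tr></thead>
    <tbody><tr>
      <td><p>Joanne Wood</p><p>Taila Santos</p></td>
      <td><p>27 of 68</p><p>30 of 60</p></td>
    </tr></tbody>
  </table>
</section>
"""

soup = BeautifulSoup(SAMPLE_HTML, "html.parser")
table = soup.select_one(
    '.js-fight-section:has(p:-soup-contains("Significant Strikes")) table')

# The number of <p> tags in the first cell gives the number of output rows.
n_rows = len(table.select("tr:nth-child(1) > td:nth-child(1) > p"))

# For output row n, take the n-th <p> of every <td> (nth-child is 1-based).
rows = [
    [p.get_text(strip=True)
     for p in table.select(f"tr:nth-child(1) td p:nth-child({n})")]
    for n in range(1, n_rows + 1)
]

df = pd.DataFrame(rows, columns=[th.get_text(strip=True) for th in table.select("th")])
print(df)
```

Counting the `p` tags first and looping over `range(1, n_rows + 1)` is just a restatement of the `enumerate` trick with the 1-based offset made explicit.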