I have a large dataset and some columns have String data-type. Because of typo mistake, some of the cells have None values but written in different styles (with small or capital letters, with or without space, with or without bracket, etc).

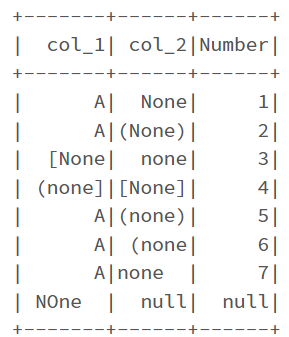

I want to count the No. of all those values (excluding Null values) in all columns. A sample dataset is below:

data = [("A", "None", 1), \

("A", "(None)", 2), \

("[None", "none", 3), \

("(none]", "[None]", 4), \

("A", "(none)", 5), \

("A", "(none", 6), \

("A", "none ", 7), \

(" NOne ", None, None), \

]

# Create DataFrame

columns= ["col_1", "col_2", "Number"]

df = spark.createDataFrame(data = data, schema = columns)

The expected result is:

{'col_1': 3, 'col_2': 7, 'Number': 0}

Any idea how to do that by PySpark?

CodePudding user response:

The logic is:

- Use regex to remove all kinds of opening brackets and closing brackets from start and end of the column value.

- Trim extra spaces, convert to lower and compare to "none".

- Count the filtered records for each column.

count_result = {}

for c in df.columns:

count_result[c] = df.select(c).filter(F.lower(F.trim(F.regexp_replace(c, r"(?:^\[|^\(|^\<|^\{|\]$|\)$|\>$|\}$)", ""))) == "none") \

.count()

print(count_result)

Output:

{'col_1': 3, 'col_2': 7, 'Number': 0}