I am looking to use Azure Data Factory to import a number of users from a third party source CSV file into the D365 user table/entity. This is usually straight forward but on this occasion I have a complication. The D365 users table/entity is already populated. The source CSV user file will have a mixture of users that are already in the D365 table/entity and others that are not.

What I would like to do is ensure the users in my source file that are already in the D365 table are not copied over as this would create duplicates.

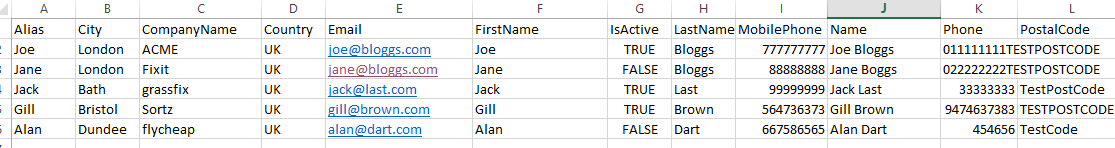

Source CSV FILE

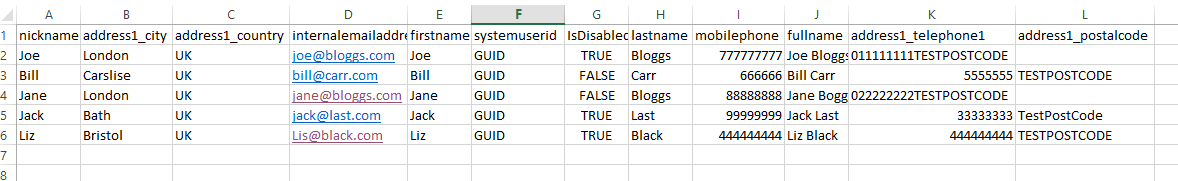

Existing D365 User table (subset of fields just to illustrate)

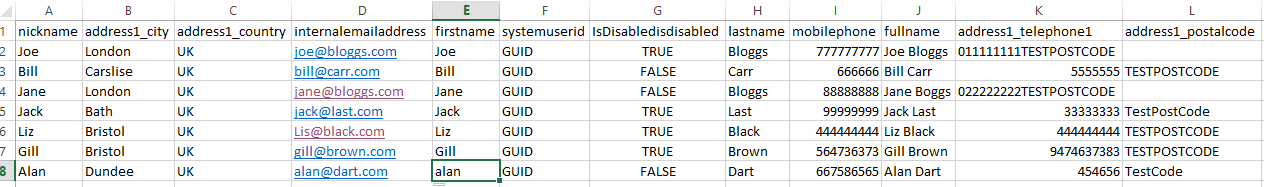

Updated D365 table with two new record added from source csv

From what I can see there are two possible solutions in Azure Data Factory

Configure the D365 sink to do it. e.g configure th sink in order to ignore records that match on a certain column? Is it possible to configure the sink in some way to accomplish this?

Pull in the D365 table/entity as a source and use it to filter my source CSV to remove user records that already exist in D365 perhaps by using a common field such as fullname to identify such records. This would ensure I only try to import new users.

I have had a look into both methods but have been struggling to find a way to implement them.

I'd like to think the scenario I have outlined above is not uncommon and there are tried and tested methods to filter out records from a source CSV that already exists in the target D365 table?

I'd apprecate any help/suggestion to help me achieve this

CodePudding user response:

You can use any one of these 2 approaches.

Use Azure data flow and Upsert the data to sink using Upsert as your writeBehavior in your dynamics sink transformation. You can refer to this SO link for information to use the Upsert method in the Azure data factory.

Pull CSV data as source1 and D365 table data as source2 and connect both sources to join transformation with left outer join. Then you can use filter transformation to filter out the NULL records of source2 (or right table). The output of filter transformation will be only new records which can be directly passed to D365 sink transformation. You can refer to this SO thread to similar process.

CodePudding user response:

When we do data extract as yours from Synapse into Azure, upsert is not working correctly, many times go into a dead loop What we do:

- Create a temp table in the target

- select source table data and extract it into the target temp table

- run stored procedure to update, insert and delete target real table based on the temp table

Here is the stored procedure query, hope it can help you:

UPDATE t

SET

t.bcfsa_teamid = syn.bcfsa_teamid

,t.bcfsa_account = syn.bcfsa_account

,t.bcfsa_name = syn.bcfsa_name

,t.bcfsa_contactteamownerid = syn.bcfsa_contactteamownerid

FROM website.bcfsa_team t

INNER JOIN syn.bcfsa_team syn on t.id = syn.id;

INSERT website.bcfsa_team (id,bcfsa_teamid,bcfsa_account,bcfsa_name,bcfsa_contactteamownerid)

SELECT id,bcfsa_teamid,bcfsa_account,bcfsa_name,bcfsa_contactteamownerid

FROM syn.bcfsa_team

EXCEPT

SELECT id,bcfsa_teamid,bcfsa_account,bcfsa_name,bcfsa_contactteamownerid

FROM website.bcfsa_team;

DELETE FROM website.bcfsa_team

WHERE Id NOT in (SELECT id FROM syn.bcfsa_team);

TRUNCATE TABLE syn.bcfsa_team;