I am modifying the 'train model' function below so to plot loss and accuracy graphs at every epochs during traning

def train_model(model, criterion, optimizer, scheduler, num_epochs=25):

since = time.time()

best_model_wts = copy.deepcopy(model.state_dict())

best_acc = 0.0

losses=[]

accuracies=[]

y_loss = {} # loss history

y_loss['aug1_train'] = []

y_loss['valid'] = []

y_acc = {}

y_acc['aug1_train'] = []

y_acc['valid'] = []

x_epoch = []

fig = plt.figure()

ax0 = fig.add_subplot(121, title="loss")

ax1 = fig.add_subplot(122, title="accuracy")

for epoch in range(num_epochs):

print('Epoch {}/{}'.format(epoch, num_epochs - 1))

print('-' * 10)

# Each epoch has a training and validation phase

for phase in ['aug1_train', 'valid']:

if phase == 'aug1_train':

scheduler.step()

model.train() # Set model to training mode

else:

model.eval() # Set model to evaluate mode

running_loss = 0.0

running_corrects = 0

# Iterate over data.

for inputs, labels,paths in dataloaders[phase]:

inputs = inputs.to(device)

labels = labels.to(device)

# zero the parameter gradients

optimizer.zero_grad()

# forward

# track history if only in train

with torch.set_grad_enabled(phase == 'aug1_train'):

outputs = model(inputs)

_, preds = torch.max(outputs, 1)

loss = criterion(outputs, labels)

# backward optimize only if in training phase

if phase == 'aug1_train':

loss.backward()

optimizer.step()

# statistics

running_loss = loss.item() * inputs.size(0)

running_corrects = torch.sum(preds == labels.data)

epoch_loss = running_loss / dataset_sizes[phase]

epoch_acc = running_corrects.double() / dataset_sizes[phase]

print('{} Loss: {:.4f} Acc: {:.4f} '.format(

phase, epoch_loss, epoch_acc))

y_loss[phase].append(epoch_loss)

y_acc[phase].append(epoch_acc)

# deep copy the model

if phase == 'valid' and epoch_acc > best_acc:

best_acc = epoch_acc

best_model_wts = copy.deepcopy(model.state_dict())

def draw_curve(current_epoch):

x_epoch.append(current_epoch)

ax0.plot(x_epoch, y_loss['aug1_train'], 'bo-', label='train')

ax0.plot(x_epoch, y_loss['valid'], 'ro-', label='val')

ax1.plot(x_epoch, y_acc['aug1_train'], 'bo-', label='train')

ax1.plot(x_epoch, y_acc['valid'], 'ro-', label='val')

if current_epoch == 0:

ax0.legend()

ax1.legend()

fig.savefig(os.path.join('/content/drive/My Drive/Stanford40/Graphs', 'train.jpg'))

draw_curve(epoch)

if phase=='aug1_train':

losses.append(epoch_loss)

accuracies.append(epoch_acc)

print()

time_elapsed = time.time() - since

print('Training complete in {:.0f}m {:.0f}s'.format(

time_elapsed // 60, time_elapsed % 60))

print('Best val Acc: {:4f}'.format(best_acc))

# load best model weights

model.load_state_dict(best_model_wts)

return model,losses,accuracies

and I load the Densenet161 for traning as below

#Load Pretrained Densenet161 model

model_ft = models.densenet161(pretrained=True)

model_ft.classifier=nn.Linear(2208,11)

model_ft = model_ft.to(device)

criterion = nn.CrossEntropyLoss()

# Observe that all parameters are being optimized

opt = optim.SGD(model_ft.parameters(), lr=0.001, momentum=0.9)

# Decay LR by a factor of 0.1 every 7 epochs

sched = lr_scheduler.StepLR(opt, step_size=5, gamma=0.1)

Finally I run the code below to start training:

model_ft,losses,accuracies = train_model(model_ft, criterion,opt ,sched,num_epochs=30)

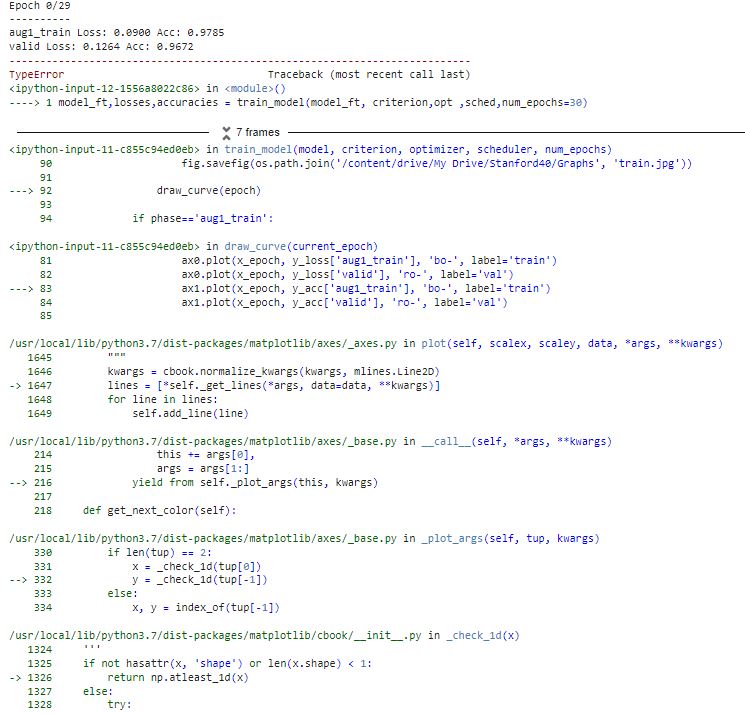

and got this error as in the picture below:

How can I modify the code to get away from this error by using tensor.cpu() ?

CodePudding user response:

It's hard to say without a detailed error backtrace. It is vague but the information it gives is that something somewhere is detecting a tensor and trying to convert it to numpy array not properly. My intuition tells me it comes from the matplotlib code in your visualization step. I believe it is trying to convert your loss terms.

You should convert them to lists after having performed back propagation...

y_loss[phase].append(epoch_loss.item())

y_acc[phase].append(epoch_acc.item())

CodePudding user response:

What if try to get item() here

running_corrects = torch.sum(preds == labels.data).item()

and remove double() when dividing?

epoch_acc = running_corrects / dataset_sizes[phase]