I have strings in this format in a column of my Spark DataFrame: 'Sep 14, 2014, 1:34:36 PM'.

I want to convert them to a date or timestamp type, using Databricks and Spark.

I've already tried `cast` and `to_date`, but neither works: I get null every time.

How can I do that?

Thanks in advance!

CodePudding user response:

Suppose we create a DataFrame like this:

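```scala
import org.apache.spark.sql.functions.{col, to_timestamp}
import spark.implicits._ // enables .toDF on an RDD of local values

var ds = spark.sparkContext.parallelize(Seq(
  "Sep 14, 2014, 01:34:36 PM"
)).toDF("date")
```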

Then we convert the column with this statement:

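```scala
// a single "a" is the AM/PM marker; "aa" is rejected by the Spark 3 parser
ds = ds.withColumn("casted", to_timestamp(col("date"), "MMM dd, yyyy, hh:mm:ss a"))
ds.show(false) // false = don't truncate long values
```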

You get this result:

```
+-------------------------+-------------------+
|date                     |casted             |
+-------------------------+-------------------+
|Sep 14, 2014, 01:34:36 PM|2014-09-14 13:34:36|
+-------------------------+-------------------+
```

The same format string also works with `to_date` and other functions that take a datetime pattern. Good luck!

CodePudding user response:

The problem is the hour in your timestamp string: it is `1` rather than `01`, so it does not match the two-digit `hh` hour pattern.

#

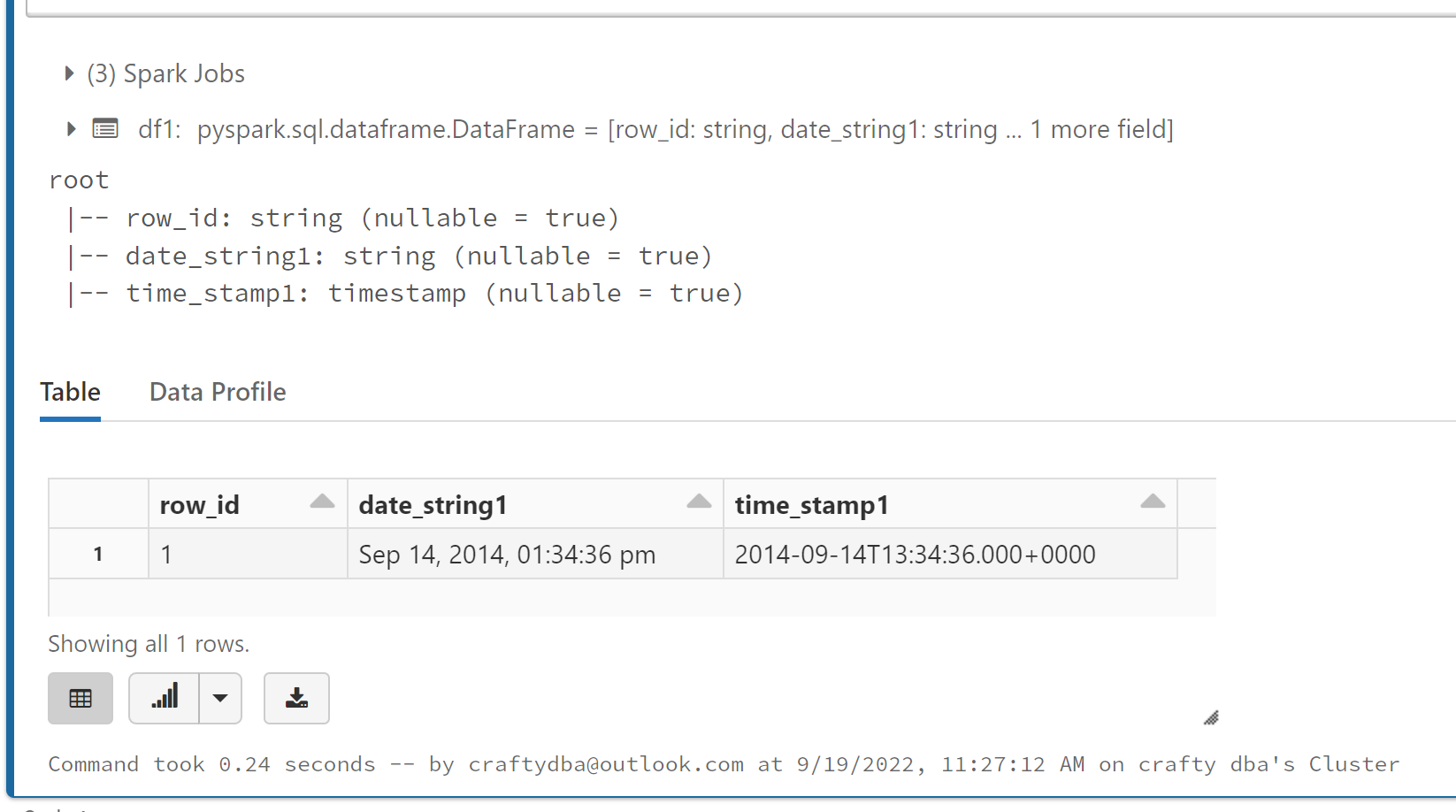

# 1 - Create sample dataframe view

#

# required library

from pyspark.sql.functions import *

# array of tuples - data

dat1 = [

("1", "Sep 14, 2014, 01:34:36 pm")

]

# array of names - columns

col1 = ["row_id", "date_string1"]

# make data frame

df1 = spark.createDataFrame(data=dat1, schema=col1)

# expand date range into list of dates

df1 = df1.withColumn("time_stamp1", to_timestamp(col("date_string1"), "MMM dd, yyyy, hh:mm:ss a"))

# show schema

df1.printSchema()

# show data

display(df1)

This code produces the correct answer.

If the data has `1:34:36` (a one-digit hour), this pattern fails. You can use a `when` clause to pick the correct conversion for each row.