I'm trying to get the input weights of each layer (LSTM 1, LSTM 2, and the attention layer) and display them as a heatmap. When I run the code, the error below appears, even though the layer exists. What is going wrong? Here is the code:

model.add(LSTM(32, input_shape=(n_timesteps,n_features), return_sequences=True))

#print weights

print(model.get_layer(LSTM).get_weights()[0])

model.add(LSTM(32, input_shape=(n_timesteps,n_features), return_sequences=True))

model.add(Dropout(0.1))

model.add(attention(return_sequences=False)) # receive 3D and output 2D

model.add(Dense(n_outputs, activation='softmax'))

model.summary()

model.compile(loss='categorical_crossentropy', optimizer='adam', metrics=['accuracy'])

# fit network

model.fit(trainX, trainy, epochs=epochs, batch_size=batch_size, verbose=verbose)

# evaluate model

_, accuracy = model.evaluate(testX, testy, batch_size=batch_size, verbose=0)

Attention layer:

class attention(Layer):
    def __init__(self, return_sequences=True):
        self.return_sequences = return_sequences
        super(attention,self).__init__()

    def build(self, input_shape):
        self.W=self.add_weight(name="att_weight", shape=(input_shape[-1],1),
                               initializer="normal")
        self.b=self.add_weight(name="att_bias", shape=(input_shape[1],1),
                               initializer="zeros")
        super(attention,self).build(input_shape)

    def call(self, x):
        e = K.tanh(K.dot(x,self.W) + self.b)
        a = K.softmax(e, axis=1)
        output = x*a
        if self.return_sequences:
            return output
        return K.sum(output, axis=1)

And this is the error that appears:

ValueError: No such layer: <class 'keras.layers.recurrent_v2.LSTM'>. Existing layers are [<keras.layers.recurrent_v2.LSTM object at 0x7f7b5c215910>].

CodePudding user response:

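model.get_layer expects a layer name (a string) or an index, not the layer class, which is why passing LSTM raises "No such layer". You can get the weights of a specific layer through model.layers after defining your whole model: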

import tensorflow as tf

import seaborn as sb

import matplotlib.pyplot as plt

class attention(tf.keras.layers.Layer):
    def __init__(self, return_sequences=True):
        self.return_sequences = return_sequences
        super(attention,self).__init__()

    def build(self, input_shape):
        # one score weight per feature, one bias per timestep
        self.W=self.add_weight(name="att_weight", shape=(input_shape[-1],1),
                               initializer="normal")
        self.b=self.add_weight(name="att_bias", shape=(input_shape[1],1),
                               initializer="zeros")
        super(attention,self).build(input_shape)

    def call(self, x):
        # score each timestep, then normalize the scores over the time axis
        e = tf.keras.backend.tanh(tf.keras.backend.dot(x,self.W) + self.b)
        a = tf.keras.backend.softmax(e, axis=1)
        # weight the inputs by their attention scores
        output = x*a
        if self.return_sequences:
            return output
        # collapse the time axis: 3D in, 2D out
        return tf.keras.backend.sum(output, axis=1)

model = tf.keras.Sequential()

model.add(tf.keras.layers.LSTM(32, input_shape=(5,10), return_sequences=True))

model.add(tf.keras.layers.LSTM(32, return_sequences=True))

model.add(tf.keras.layers.Dropout(0.1))

model.add(attention(return_sequences=False)) # receive 3D and output 2D

model.add(tf.keras.layers.Dense(3, activation='softmax'))

model.summary()

model.compile(loss='categorical_crossentropy', optimizer='adam', metrics=['accuracy'])

trainx = tf.random.normal((25, 5, 10))

trainy = tf.random.uniform((25, 3), maxval=3)

model.fit(trainx, trainy, epochs=5, batch_size=4)

lstm1_weights = model.layers[0].get_weights()[0]

lstm2_weights = model.layers[1].get_weights()[0]

attention_weights = model.layers[3].get_weights()[0]

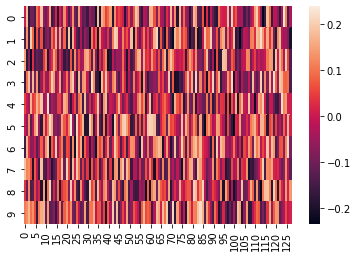

heat_map = sb.heatmap(lstm1_weights)

plt.show()
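To view several layers at once, the kernels can be drawn side by side. The following is only a sketch under a few assumptions: it uses an untrained stand-in model without the custom attention layer, the dictionary keys and the file name layer_kernels.png are made up for illustration, and the Agg backend is selected so it runs without a display.

```python
import matplotlib
matplotlib.use("Agg")  # off-screen backend; remove this line to use plt.show() instead
import matplotlib.pyplot as plt
import seaborn as sb
import tensorflow as tf

# Stand-in model (assumption): two LSTMs and a Dense layer, no custom attention layer.
model = tf.keras.Sequential([
    tf.keras.Input(shape=(5, 10)),
    tf.keras.layers.LSTM(32, return_sequences=True),
    tf.keras.layers.LSTM(32, return_sequences=True),
    tf.keras.layers.Dense(3, activation="softmax"),
])

# get_weights()[0] is the input kernel of each layer.
kernels = {
    "lstm 1 kernel": model.layers[0].get_weights()[0],
    "lstm 2 kernel": model.layers[1].get_weights()[0],
    "dense kernel": model.layers[2].get_weights()[0],
}

# One heatmap per kernel, drawn on its own subplot.
fig, axes = plt.subplots(1, len(kernels), figsize=(15, 4))
for ax, (title, weights) in zip(axes, kernels.items()):
    sb.heatmap(weights, ax=ax)
    ax.set_title(title)

fig.tight_layout()
fig.savefig("layer_kernels.png")  # hypothetical output file name
```

With the model from this answer, the same loop should work on lstm1_weights, lstm2_weights, and attention_weights.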

Model: "sequential_16"
_________________________________________________________________
 Layer (type)                Output Shape              Param #
=================================================================
 lstm_24 (LSTM)              (None, 5, 32)             5504
 lstm_25 (LSTM)              (None, 5, 32)             8320
 dropout_12 (Dropout)        (None, 5, 32)             0
 attention_12 (attention)    (None, 32)                37
 dense_12 (Dense)            (None, 3)                 99
=================================================================
Total params: 13,960
Trainable params: 13,960
Non-trainable params: 0
_________________________________________________________________

Epoch 1/5

7/7 [==============================] - 4s 10ms/step - loss: 5.5033 - accuracy: 0.4400

Epoch 2/5

7/7 [==============================] - 0s 8ms/step - loss: 5.4899 - accuracy: 0.5200

Epoch 3/5

7/7 [==============================] - 0s 9ms/step - loss: 5.4771 - accuracy: 0.4800

Epoch 4/5

7/7 [==============================] - 0s 9ms/step - loss: 5.4701 - accuracy: 0.5200

Epoch 5/5

7/7 [==============================] - 0s 8ms/step - loss: 5.4569 - accuracy: 0.5200
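Regarding the error in the question: get_layer looks a layer up by name or by index, so passing the LSTM class cannot match anything. Here is a minimal sketch of both lookups, assuming a small stand-in model; the layer names "lstm_1", "lstm_2", and "out" are chosen here for illustration only.

```python
import tensorflow as tf

# Stand-in model (assumption); explicit names make the lookup by name predictable.
model = tf.keras.Sequential([
    tf.keras.Input(shape=(5, 10)),
    tf.keras.layers.LSTM(32, return_sequences=True, name="lstm_1"),
    tf.keras.layers.LSTM(32, name="lstm_2"),
    tf.keras.layers.Dense(3, activation="softmax", name="out"),
])

# get_layer takes a name (string) or an index, not a layer class.
by_name = model.get_layer("lstm_1")
by_index = model.get_layer(index=0)

# An LSTM layer stores three arrays: input kernel, recurrent kernel, bias.
kernel, recurrent_kernel, bias = by_name.get_weights()
print(kernel.shape, recurrent_kernel.shape, bias.shape)
```

The input kernel has shape (input_dim, 4 * units) because the four LSTM gates are stored next to each other, so get_weights()[0] in the code above is the input-to-hidden matrix for all four gates.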

If you want to see how the weights of your layers change during training, you should define a callback as shown in this post.
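A minimal sketch of such a callback follows. It is not the code from the linked post: the class name WeightHistory and its layer_index parameter are hypothetical, and the model and data are random stand-ins.

```python
import numpy as np
import tensorflow as tf


class WeightHistory(tf.keras.callbacks.Callback):
    """Hypothetical helper: keeps a copy of one layer's input kernel after every epoch."""

    def __init__(self, layer_index):
        super().__init__()
        self.layer_index = layer_index
        self.history = []

    def on_epoch_end(self, epoch, logs=None):
        # get_weights() returns NumPy arrays; copy so later updates cannot alias them
        kernel = self.model.layers[self.layer_index].get_weights()[0]
        self.history.append(kernel.copy())


# Stand-in model and random data (assumptions, for illustration only).
model = tf.keras.Sequential([
    tf.keras.Input(shape=(5, 10)),
    tf.keras.layers.LSTM(8),
    tf.keras.layers.Dense(3, activation="softmax"),
])
model.compile(loss="categorical_crossentropy", optimizer="adam")

x = np.random.normal(size=(16, 5, 10)).astype("float32")
y = tf.keras.utils.to_categorical(np.random.randint(0, 3, size=16), num_classes=3)

tracker = WeightHistory(layer_index=0)
model.fit(x, y, epochs=3, batch_size=4, verbose=0, callbacks=[tracker])

print(len(tracker.history), tracker.history[0].shape)
```

Each entry of tracker.history can then be passed to sb.heatmap to compare the kernel from one epoch to the next.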