I have 29,938,766 rows inside the VISITS table and the table looks like this

| USER_ID (INT) | VISITED_IN (DATETIME) |

|---|---|

| 65 | 2020-08-26 07:57:43 |

| 1182 | 2019-03-15 02:46:48 |

| 1564 | 2015-07-04 10:59:44 |

| 73 | 2021-03-18 00:25:08 |

| 3791 | 2017-10-17 12:22:45 |

| 51 | 2022-05-02 19:11:09 |

| 917 | 2017-11-20 15:32:06 |

| 3 | 2019-12-29 15:15:51 |

| 51 | 2015-02-08 17:48:30 |

| 1531 | 2020-08-05 08:44:55 |

| Etc... | Etc... |

When running this query, It takes 17-20 seconds and returns 63,514 (The user has 63,514 visits)

SELECT COUNT(*) FROM VISITS WHERE USER_ID = 917

When running this query, It takes 17-20 seconds and returns 193 (The user has 193 visits)

SELECT COUNT(*) FROM VISITS WHERE USER_ID = 716

The problem is the query always takes between 17-20 seconds for 29,938,766 rows even if the user has only 3, 50, 70, or 1,000,000 visits.

I think the problem is because it is looping for all rows?

The second query must be faster than the first query. It depends on the number of rows. But both queries take the same time!

What do you suggest to me to avoid this problem?

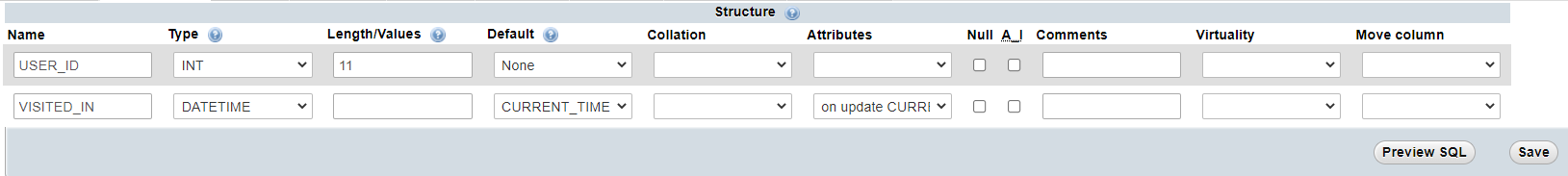

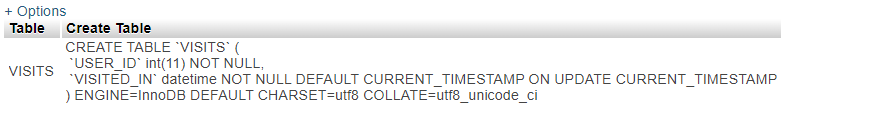

Table structure

Update: Here is a new suggested scenario:

When a user enters his or others' profile, He can see the number of profile visits and he can filter visits using this way

Last 24 hours

|

---> SELECT COUNT(*) FROM VISITS WHERE USER_ID = 5 AND VISITED_IN >= DATE_SUB(NOW(), INTERVAL 1 DAY);

Last 7 days

|

---> SELECT COUNT(*) FROM VISITS WHERE USER_ID = 5 AND VISITED_IN >= DATE_SUB(NOW(), INTERVAL 7 DAY);

Last 30 days

|

---> SELECT COUNT(*) FROM VISITS WHERE USER_ID = 5 AND VISITED_IN >= DATE_SUB(NOW(), INTERVAL 30 DAY);

All time

|

---> SELECT VISITS FROM USERS WHERE USER_ID = 5;

Also, I'll create a recurring event that executes this command every day.

DELETE FROM VISITS WHERE VISITED_IN <= DATE_SUB(NOW(), INTERVAL 30 DAY);

Also, I'll make sure to increase the VISITS column when adding a new row in the VISITS table.

UPDATE USERS SET VISITS = VISITS 1 WHERE ID = 5

CodePudding user response:

INDEX(user_id, visited_in)

will speed up all the SELECTs you mentioned. They will have to scan a chunk of the index; they will not have to "scan the whole table".

The DELETE needs `INDEX(visited_in). But it is problematic if you don't run it frequently enough. This is because Deleting thousands of rows at one time is potentially a problem. Consider running that delete at least once an hour.

If the table will be really big, etc, consider using Partitioning of a "time series". With that DROP PARTITION, is much faster. Partition

Any caching service will provide stale counts, but it will be faster some of the time.

It is "ok to hit the database every time someone opens a page", but only if the queries are efficient enough. Do Index.

In my Answer to your other Question, I explain how a Summary table can speed things up even more. However it assumes "last N days" is measured from midnight to midnight. Your current queries are NOW() - INTERVAL N DAY. That is messier to implement than midnight. Are you willing to change the meaning of "last N days"?

(Some INDEX basics...)

An important reason for any index is its ability to rapidly find the row(s) based on some column(s).

- An

INDEXis a list of keys mapping to rows. - A

UNIQUE INDEXis anINDEX, plus a uniqueness constraint -- implying that no two rows have the same value in the index. - The one and only

PRIMARY KEYis a unique index designated to uniquely identify every row in the table.

"key" and "index" are synonyms.

Indexes (in MySQL's InnoDB engine) are implemented as a BTree (actually a B Tree; see Wikipedia). In the case of the PK, the rest of the columns are sitting there with the PK value. In the case of "secondary" keys, the 'value' part of the BTree is the PK column(s).

Any index can contain 1 column or multiple columns (called "composite")

INDEX(lastname) is not likely to be UNIQUE

INDEX(lastname, firstname) is still not likely to be UNIQUE, but it is "composite".