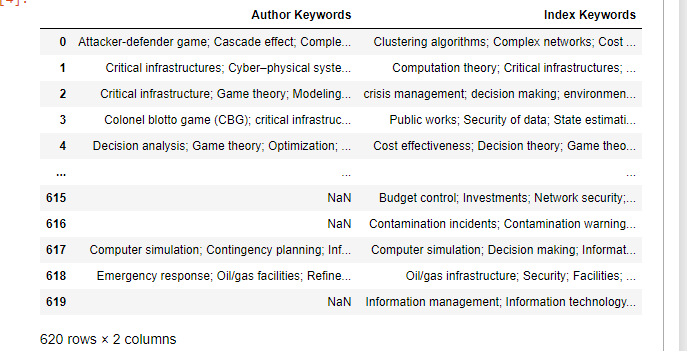

I have a bunch of keywords stored in a 620x2 pandas dataframe seen below. I think I need to treat each entry as its own set, where semicolons separate elements. So, we end up with 1240 sets. Then I'd like to be able to search how many times keywords of my choosing appear together. For example, I'd like to figure out how many times 'computation theory' and 'critical infrastructure' appear together as a subset in these sets, in any order. Is there any straightforward way I can do this?

CodePudding user response:

Not sure if this is considered straightforward, but it works. keyword_list is the list of paired keywords you want to search.

df['Author Keywords'] = df['Author Keywords'].fillna('').str.split(';\s*').apply(set)

df['Index Keywords'] = df['Index Keywords'].fillna('').str.split(';\s*').apply(set)

df.apply(lambda x : x.apply(lambda y : all([kw in y for kw in keyword_list]))).sum().sum()

CodePudding user response:

Use .loc to find if the keywords appear together.

Do this after you have split the data into 1240 sets. I don't understand whether you want to make new columns or just want to keep the columns as is.

# create a filter for keyword 1

filter_keyword_2 = (df['column_name'].str.contains('critical infrastructure'))

# create a filter for keyword 2

filter_keyword_2 = (df['column_name'].str.contains('computation theory'))

# you can create more filters with the same construction as above.

# To check the number of times both the keywords appear

len(df.loc[filter_keyword_1 & filter_keyword_2])

# To see the dataframe

subset_df = df.loc[filter_keyword_1 & filter_keyword_2]

.loc selects the conditional data frame. You can use subset_df=df[df['column_name'].str.contains('string')] if you have only one condition.

To the column split or any other processing before you make the filters or run the filters again after processing.